VRnture is an immersive virtual reality therapy environment where therapists and patients interact through avatars in a safe virtual space, using real-time body and facial tracking to enhance emotional understanding and deliver more effective, engaging therapy.

Wang Bo Angela

Bernard Tan

Belinda Seet

VRnture is an immersive virtual reality therapy platform designed to make mental health support more approachable and engaging. By incorporating real-time body and facial tracking, the platform captures non-verbal cues to enhance emotional understanding, while session transcription reduces administrative burden and allows therapists to focus fully on the interaction. Together, these features create a more natural, expressive, and accessible therapy experience that builds trust, improves engagement, and supports better therapeutic outcomes.

Captures real-time movements and facial expressions to accurately reflect users’ emotions within the virtual environment. This enhances non-verbal communication and improves therapists’ understanding of emotional cues.

Allows users to personalise their avatars based on their preferences. This increases comfort, reduces pressure, and encourages more open expression during therapy.

Adapts virtual environments to suit different therapy goals and session needs. This enables controlled, progressive exposure while maintaining user comfort.

Supports real-time interaction between therapist and patient within the virtual space. This ensures accessible and meaningful communication during sessions.

Automatically records and transcribes therapy sessions for later review. This reduces administrative workload and allows therapists to focus fully on patient interaction.

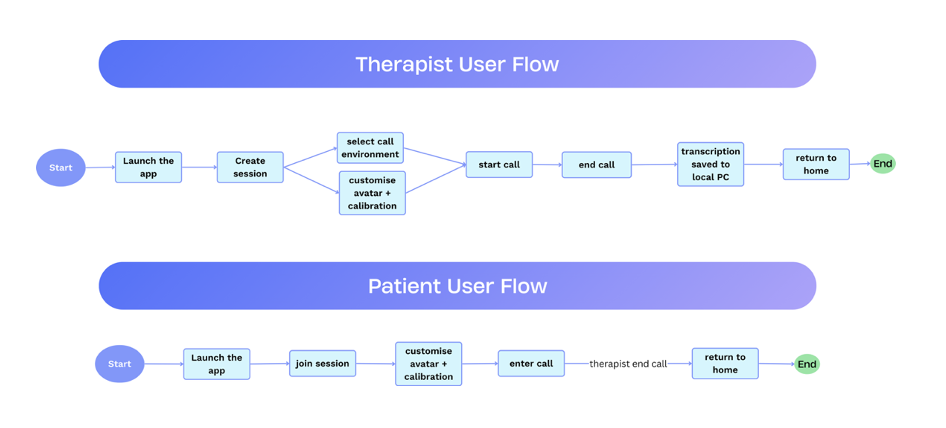

The therapist initiates the process by launching the application and creating a new session. Upon session creation, they are directed to a waiting lobby where they can configure the session by selecting the virtual environment and customising their avatar. During this stage, system calibration for body and facial tracking is performed automatically. Once the patient is ready, the therapist commences the session. Upon completion, a transcription of the session is automatically generated and saved to the therapist’s local device. The therapist may then return to the home interface, concluding the workflow.

The patient begins by launching the application and joining an existing session using the IP address provided by the therapist. They are then placed in a waiting lobby where they can customise their avatar. After completing setup, the patient indicates readiness and waits for the therapist to initiate the session. During the session, the patient interacts with the therapist in real time. Once the therapist ends the session, the patient exits and returns to the home interface.
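The two workflows above can be read as a small state machine shared by therapist and patient. The following is a minimal sketch in Python for illustration only; the class, method, and stage names are hypothetical and are not taken from the VRnture codebase.

```python
from enum import Enum, auto


class Stage(Enum):
    LOBBY = auto()       # waiting lobby: environment and avatar setup
    IN_SESSION = auto()  # therapist and patient interacting in real time
    ENDED = auto()       # session over; transcription can be generated


class Session:
    """Hypothetical session object, created when the therapist starts a new session."""

    def __init__(self):
        self.stage = Stage.LOBBY
        self.patient_joined = False
        self.patient_ready = False

    def patient_join(self):
        # The patient joins an existing session and lands in the lobby.
        if self.stage is not Stage.LOBBY:
            raise RuntimeError("patients can only join while the session is in the lobby")
        self.patient_joined = True

    def patient_set_ready(self):
        # The patient signals readiness after customising their avatar.
        if self.stage is not Stage.LOBBY or not self.patient_joined:
            raise RuntimeError("patient must be in the lobby to signal readiness")
        self.patient_ready = True

    def therapist_start(self):
        # Only the therapist starts the session, and only once the patient is ready.
        if self.stage is not Stage.LOBBY or not self.patient_ready:
            raise RuntimeError("session starts from the lobby once the patient is ready")
        self.stage = Stage.IN_SESSION

    def therapist_end(self):
        # Only the therapist ends the session; both users then return home.
        if self.stage is not Stage.IN_SESSION:
            raise RuntimeError("no session in progress")
        self.stage = Stage.ENDED
```

In this sketch the readiness check is enforced in `therapist_start`, mirroring the rule that the patient waits for the therapist to begin.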

The platform is developed using Godot 4.6 as the core engine, with Blender used for the creation and modelling of avatars and virtual environments.

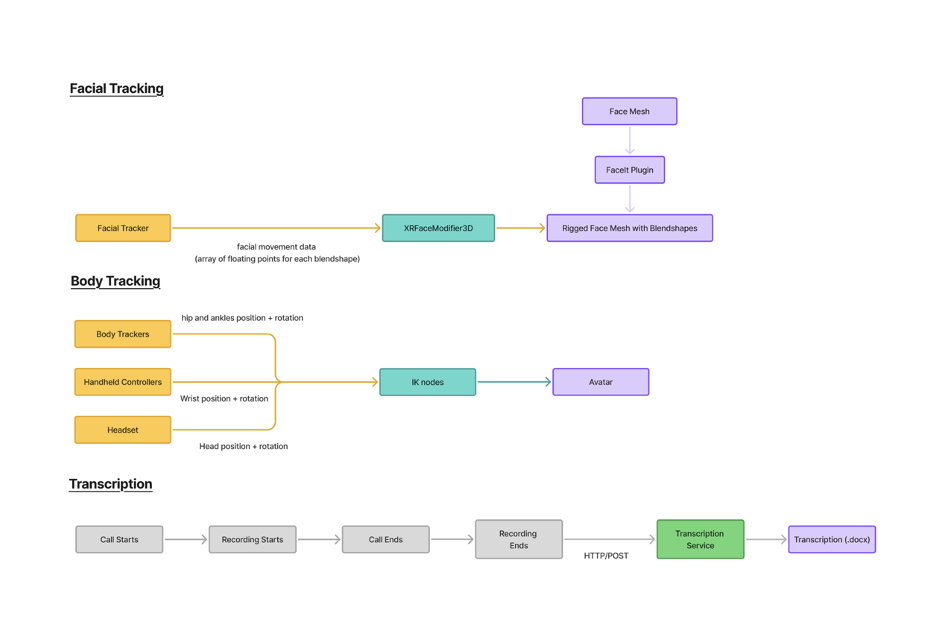

Facial tracking captures real-time facial movement data and maps it onto a rigged avatar using blendshapes. This allows the avatar to accurately reflect user expressions within the virtual environment. It enhances emotional communication by preserving subtle facial cues during interaction.
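As a rough illustration of the mapping step, the sketch below takes one frame of tracked expression coefficients, renames them to the avatar's blendshape names, clamps them to the 0 to 1 range, and smooths them against the previous frame. It assumes the tracker reports one coefficient per expression; the function name, the example expression names, and the smoothing factor are hypothetical, and the project's Godot implementation may differ.

```python
def apply_face_frame(current, tracked, name_map, smoothing=0.5):
    """Return updated blendshape weights for one tracking frame.

    current   -- dict of avatar blendshape name -> weight from the previous frame
    tracked   -- dict of tracker expression name -> raw coefficient
    name_map  -- dict of tracker expression name -> avatar blendshape name
    smoothing -- fraction of the gap to the target closed per frame (0..1)
    """
    updated = dict(current)
    for source_name, value in tracked.items():
        target_name = name_map.get(source_name)
        if target_name is None:
            continue  # the avatar has no blendshape for this expression
        target = min(1.0, max(0.0, value))  # clamp to the valid weight range
        previous = current.get(target_name, 0.0)
        # Exponential smoothing reduces jitter from noisy tracking data.
        updated[target_name] = previous + smoothing * (target - previous)
    return updated


# Example: a fully open jaw moves the avatar's mouth halfway in one frame.
weights = apply_face_frame({}, {"jawOpen": 1.0}, {"jawOpen": "mouth_open"})
```

A lower `smoothing` value gives steadier but slower expressions; a value of 1.0 applies the tracked coefficient directly.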

Body tracking uses inputs from trackers, controllers, and the headset to capture the user’s body position and movements. These inputs are processed through inverse kinematics (IK) to animate the avatar in real time. This enables natural gestures and improves non-verbal communication during sessions.
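To show the idea behind IK, the sketch below solves the simplest case: a two-bone limb (for example, upper arm and forearm) in a 2D plane, reaching for a tracked hand position. This is a textbook analytic solution based on the law of cosines, not the project's solver; a full-body 3D rig would typically rely on the engine's IK tools. All names are hypothetical.

```python
import math


def solve_two_bone_ik(upper_len, lower_len, target_x, target_y):
    """Return (shoulder_angle, elbow_bend) in radians for a planar two-bone limb.

    The shoulder sits at the origin. Targets out of reach are pulled back to
    the nearest reachable distance along the same direction.
    """
    distance = math.hypot(target_x, target_y)
    min_reach = max(abs(upper_len - lower_len), 1e-9)
    distance = min(max(distance, min_reach), upper_len + lower_len)

    def safe_acos(value):
        return math.acos(min(1.0, max(-1.0, value)))

    # Interior angle at the elbow, from the law of cosines.
    interior = safe_acos(
        (upper_len**2 + lower_len**2 - distance**2) / (2 * upper_len * lower_len)
    )
    # Angle between the upper bone and the shoulder-to-target line.
    offset = safe_acos(
        (upper_len**2 + distance**2 - lower_len**2) / (2 * upper_len * distance)
    )
    shoulder_angle = math.atan2(target_y, target_x) - offset
    elbow_bend = math.pi - interior  # rotation of the lower bone relative to the upper
    return shoulder_angle, elbow_bend


def hand_position(upper_len, lower_len, shoulder_angle, elbow_bend):
    """Forward kinematics: where the hand ends up for the given joint angles."""
    elbow_x = upper_len * math.cos(shoulder_angle)
    elbow_y = upper_len * math.sin(shoulder_angle)
    total = shoulder_angle + elbow_bend
    return (elbow_x + lower_len * math.cos(total),
            elbow_y + lower_len * math.sin(total))
```

Feeding the returned angles into `hand_position` reproduces the target whenever it is within reach, which is a convenient way to check the solver.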

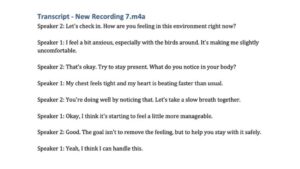

The system records audio during the therapy session and processes it once the session ends. The recording is sent to a transcription service via HTTP to generate a text version of the conversation. This transcript is then saved for later review and documentation.
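The post-session step could look roughly like the Python sketch below, which builds the HTTP request, reads the response, and saves the transcript. The endpoint URL, the WAV content type, and the JSON response shape (`{"text": ...}`) are assumptions made for illustration; the source does not specify which transcription service or format the project uses.

```python
import json
import urllib.request
from pathlib import Path


def build_transcription_request(audio_bytes, url):
    """Build a POST request carrying the recorded audio (assumed to be WAV)."""
    return urllib.request.Request(
        url,
        data=audio_bytes,
        headers={"Content-Type": "audio/wav"},
        method="POST",
    )


def parse_transcript(response_body):
    """Extract the transcript, assuming a JSON body shaped like {"text": "..."}."""
    return json.loads(response_body.decode("utf-8"))["text"]


def transcribe_and_save(audio_path, url, transcript_path):
    """Send the session recording after the session ends and save the result."""
    request = build_transcription_request(Path(audio_path).read_bytes(), url)
    with urllib.request.urlopen(request) as response:
        text = parse_transcript(response.read())
    Path(transcript_path).write_text(text, encoding="utf-8")
    return text
```

A call such as `transcribe_and_save("session.wav", "http://localhost:8000/transcribe", "session.txt")` would run the whole step, with the URL and file names here being placeholders.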

Capstone S17 would like to express our sincere gratitude to our Capstone instructors, Dr. Wang Bo and Prof. Edwin Koh, for their invaluable guidance, insightful feedback, and continuous support throughout the development of this project. Their expertise and encouragement were instrumental in shaping the direction and success of VRnture.

We would also like to extend our appreciation to the Klass Engineering team, Jay Yeo and Nicholas Chan, for their technical support and collaboration. Their contributions played a key role in enabling the implementation and refinement of our system.

Finally, we are grateful to everyone who supported us along the way and contributed to making this project a meaningful and rewarding experience.

At Singapore University of Technology and Design (SUTD), we believe that the power of design stems from an understanding of human experiences and needs, and that it creates innovation that enhances and transforms the way we live. This is why we have developed a multi-disciplinary curriculum, delivered via a hands-on, collaborative learning pedagogy and environment, that concludes in a Capstone project.

The Capstone project is a collaboration between companies and senior-year students. Students of different majors come together to work in teams and contribute their technology and design expertise to solve real-world challenges faced by companies. The Capstone project will culminate with a design showcase, unveiling the innovative solutions from the graduating cohort.

The Capstone Design Showcase is held annually to celebrate the success of our graduating students and the enthralling multi-disciplinary projects they have developed.