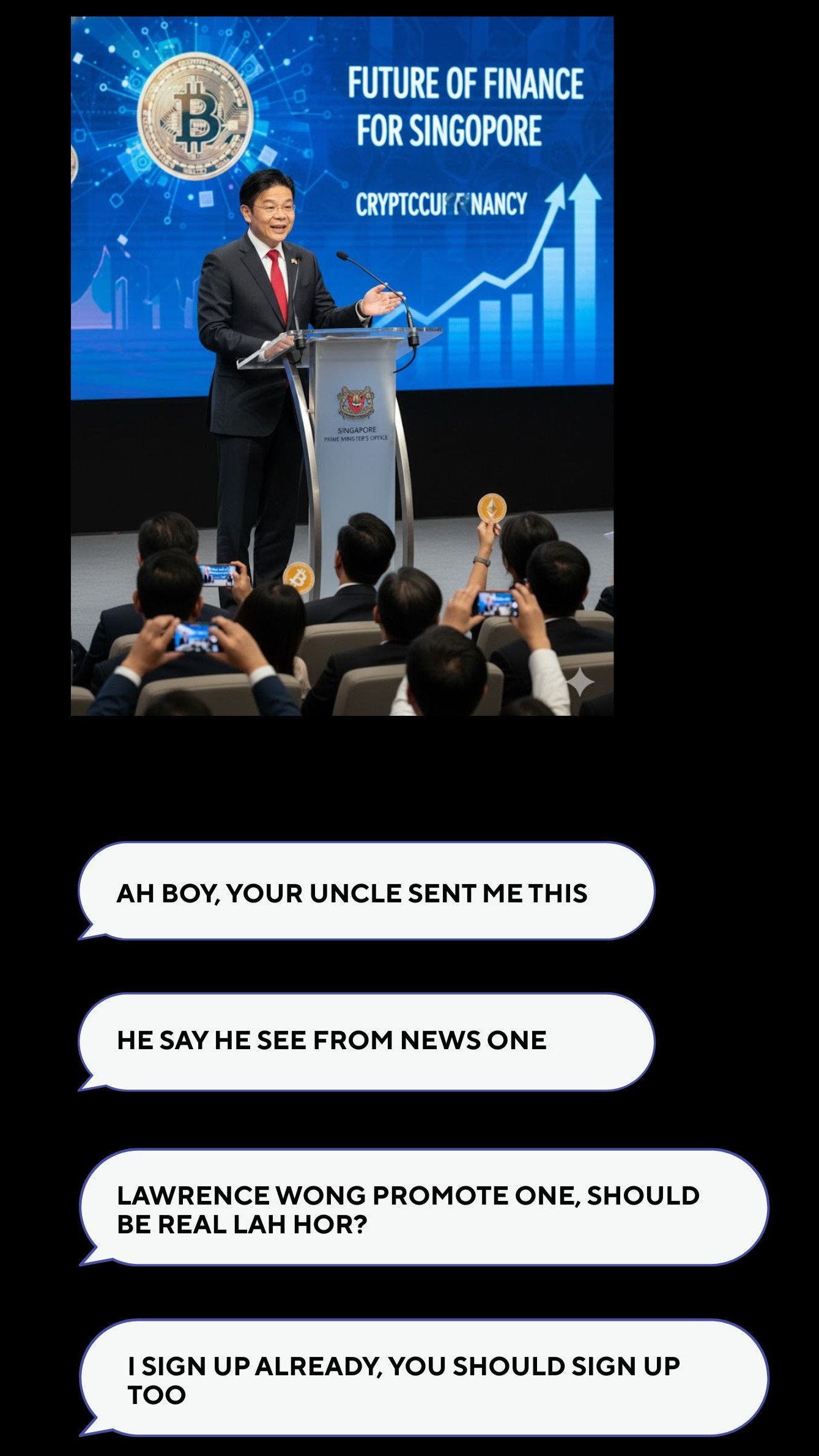

You receive a message from a family member about a new cryptocurrency opportunity.

You’re skeptical at first.

But a government figure is mentioned.

The source seems legitimate.

The website looks real.

It feels safe,

so you sign up.

You enter your credit card details.

And then you realise ...

You've been scammed.

In today’s world, even messages from trusted sources, with reputable names and convincing websites, may not be legitimate.

With AI-generated content, deepfakes, and increasingly sophisticated scams, it’s becoming harder than ever to tell what’s real.

If only there were a tool that could verify opportunities before committing ...

Introducing ...

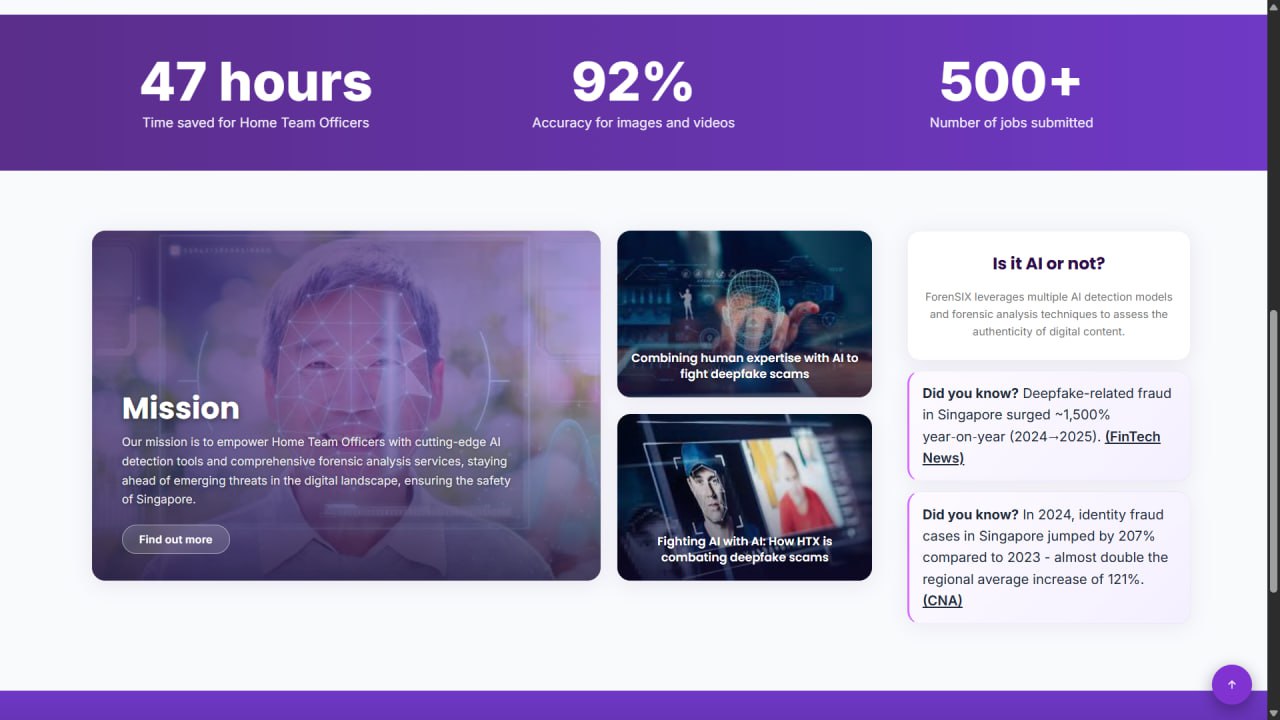

State-of-the-art deepfake detection software

Built against AI, with AI

ForenSIX's Capabilities

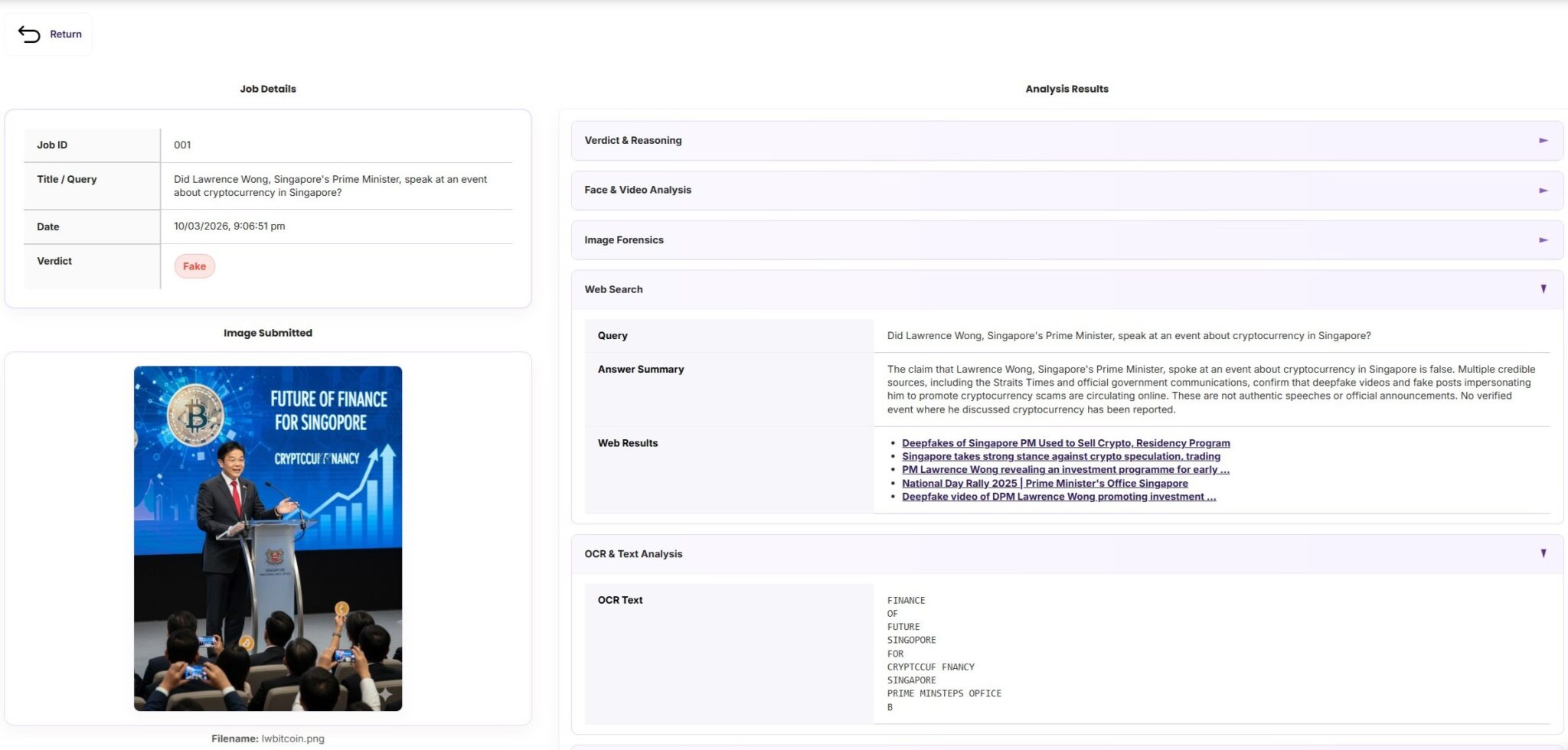

User Input Page

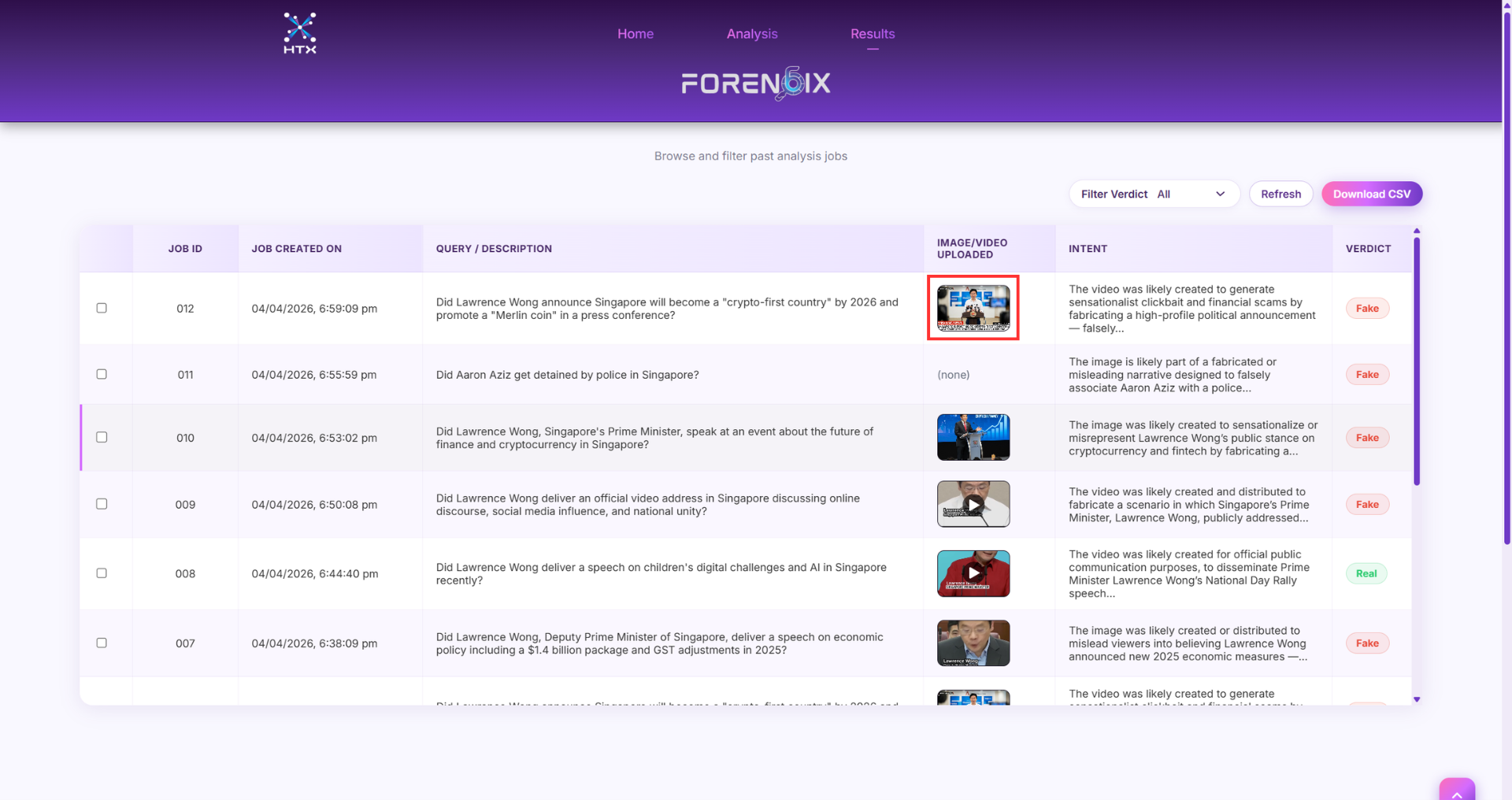

Where users upload images, videos, text, or URLs they want to analyse for deepfakes and misinformation.

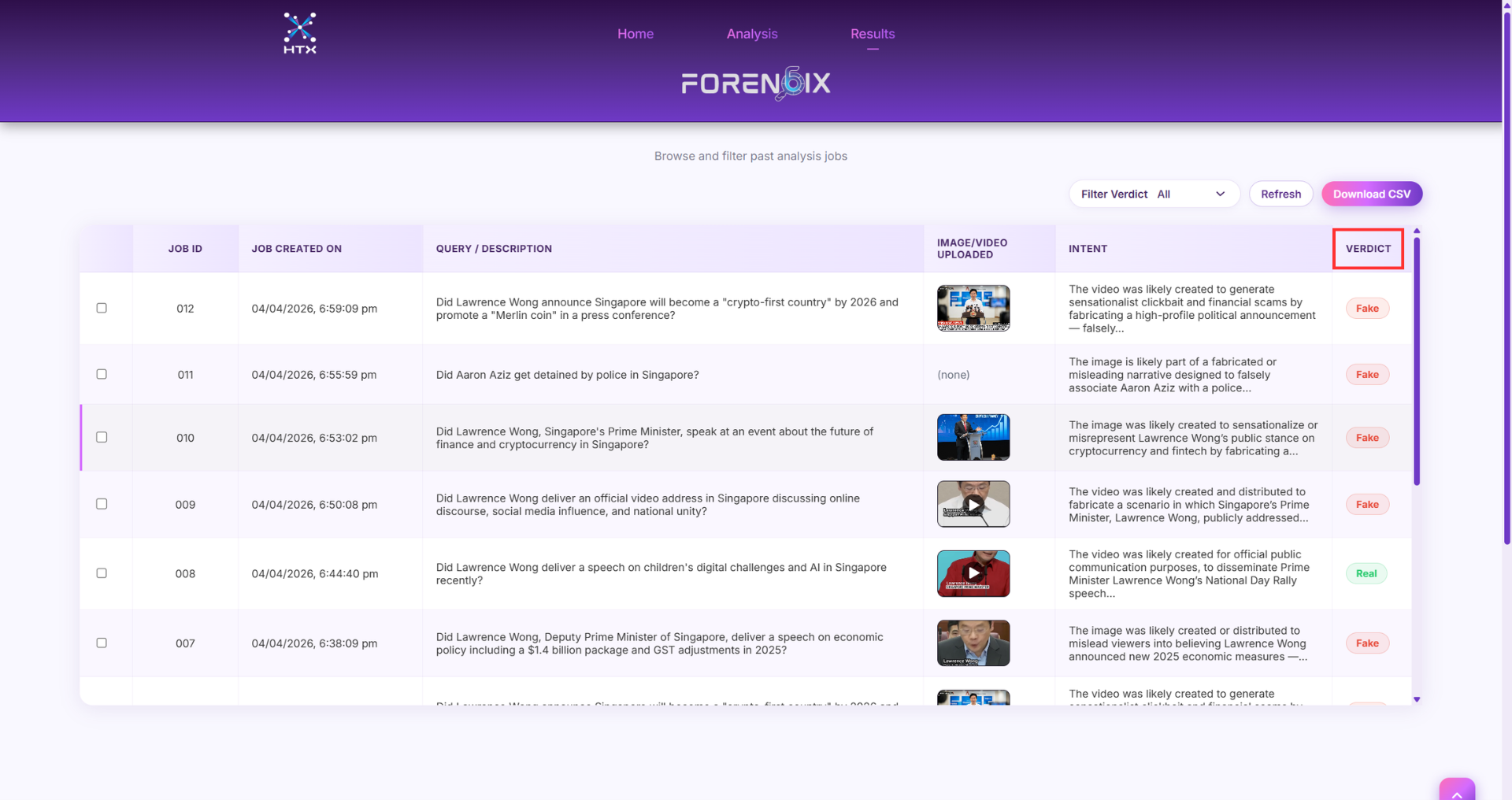

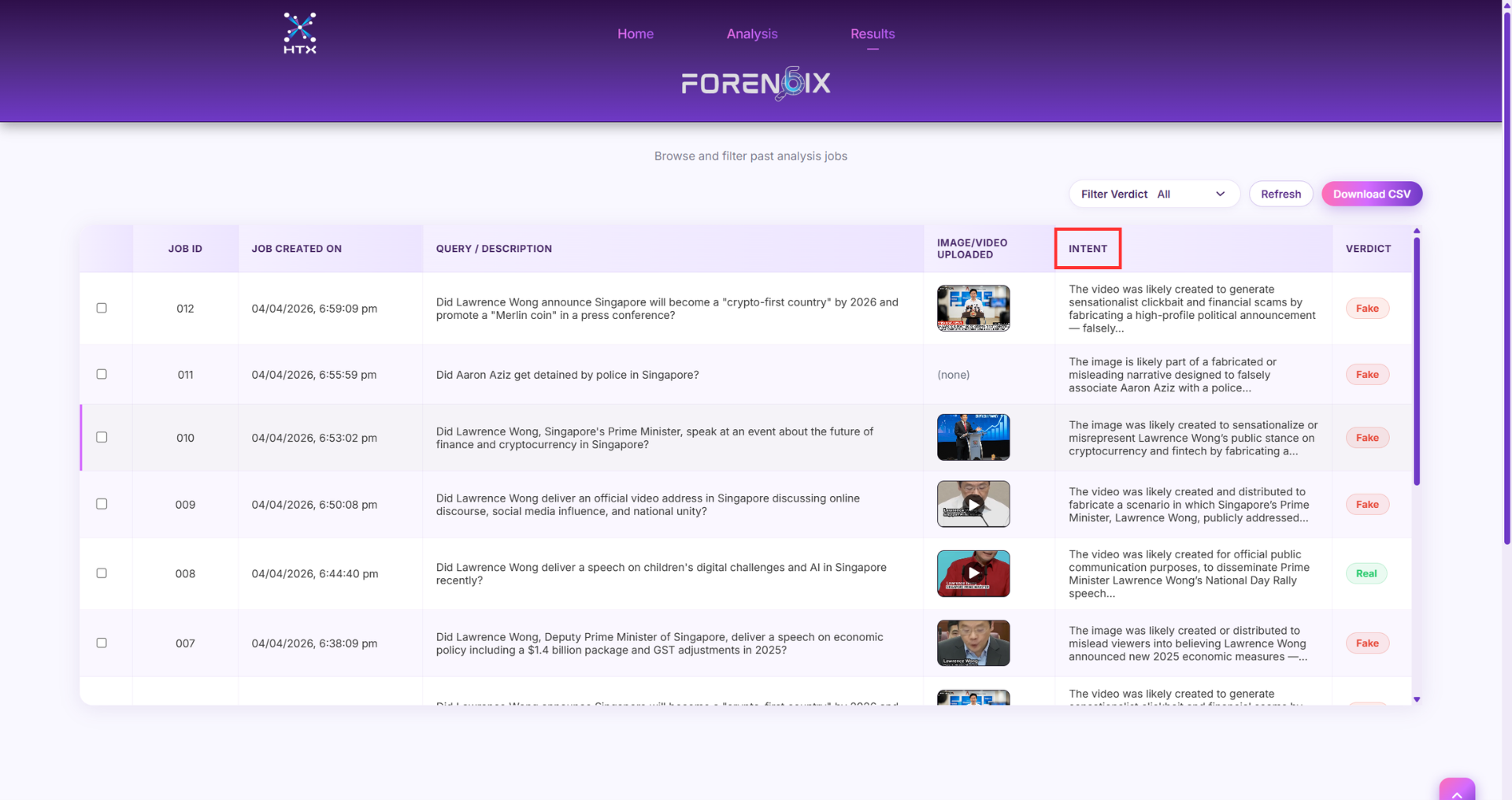

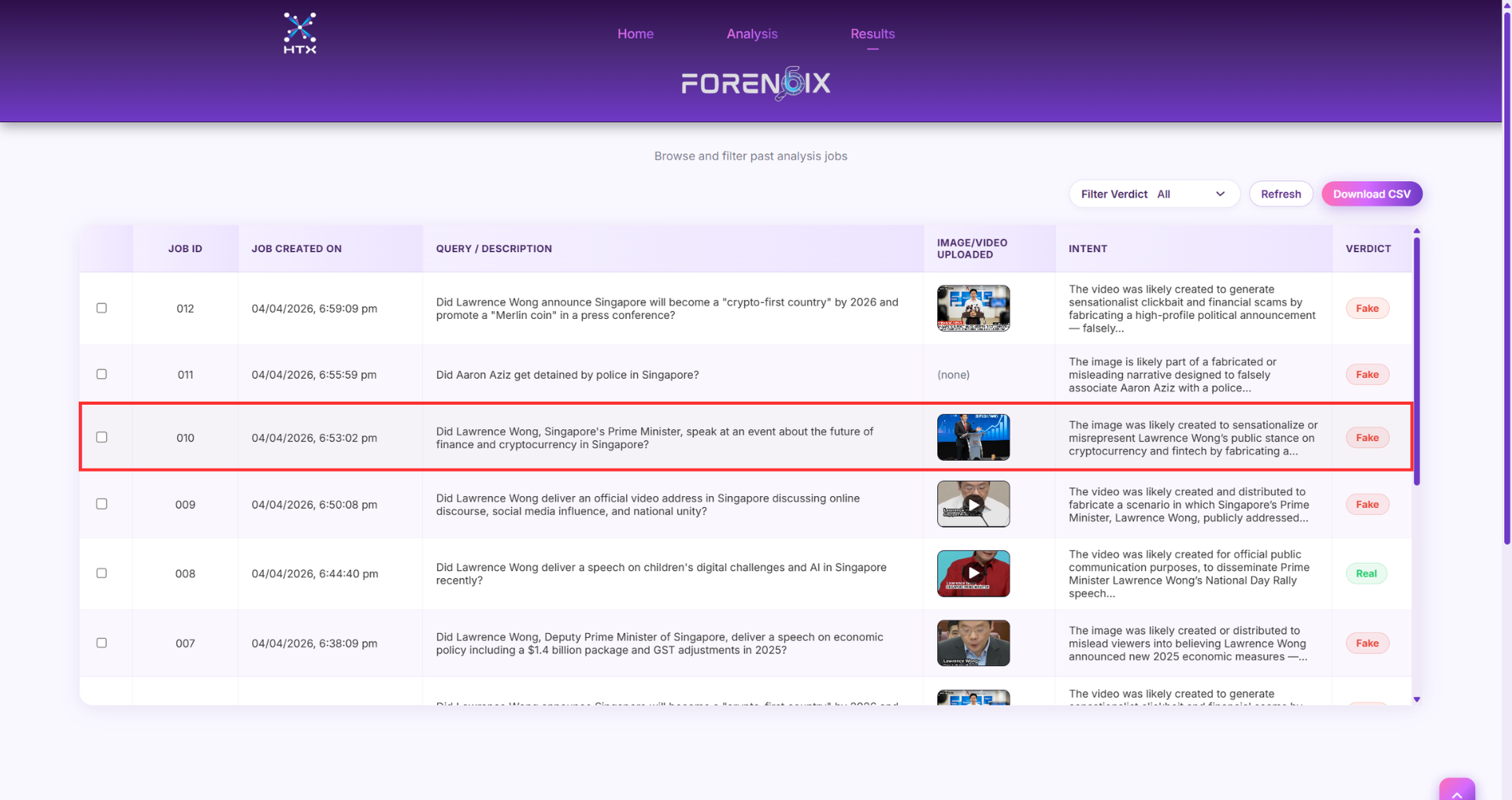

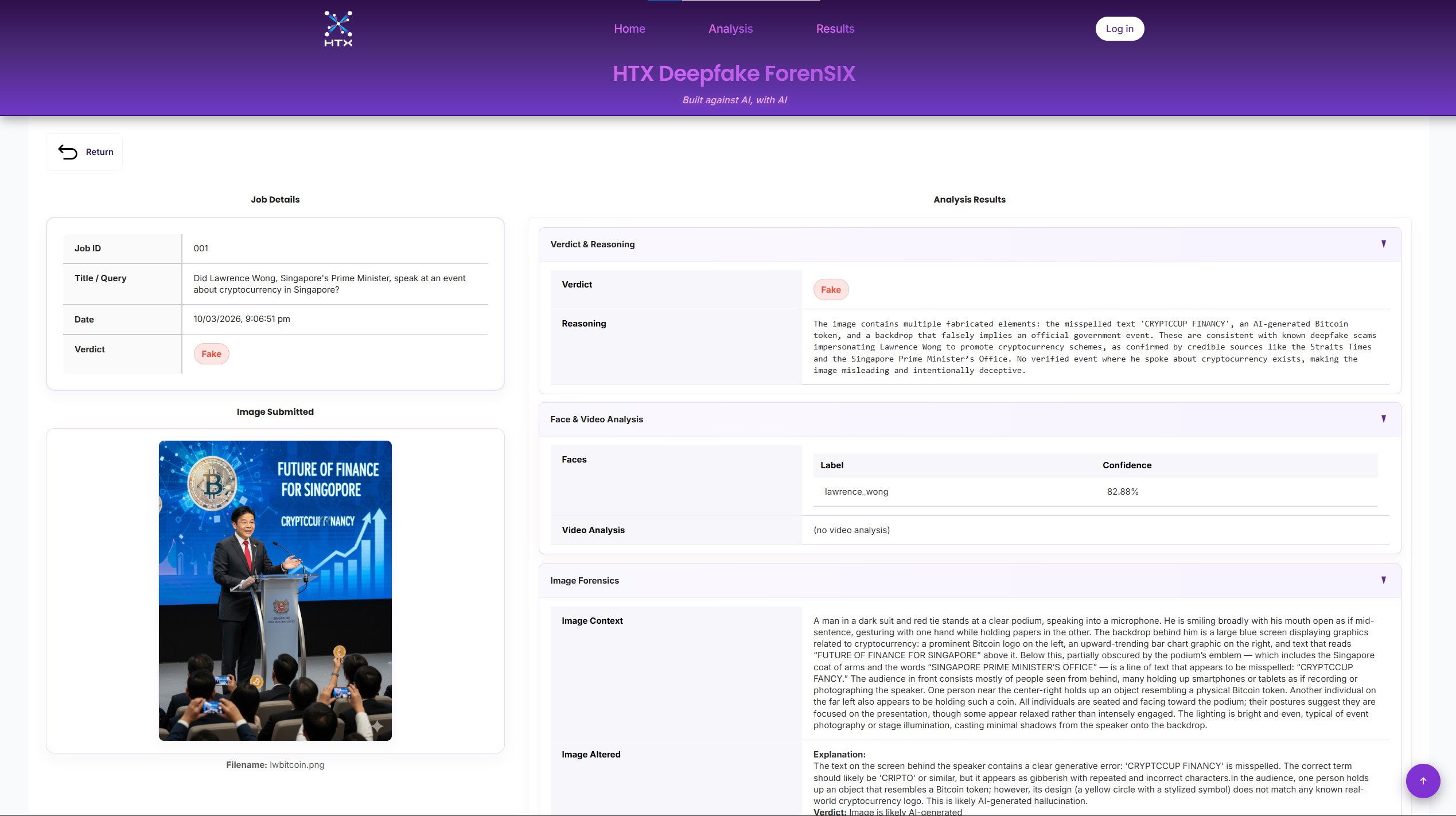

Analysis Page

Where users view a detailed analysis of the uploaded content. It consists of Verdict & Reasoning, Face & Video Analysis, Image Forensics, Web Search, and OCR & Text Analysis.


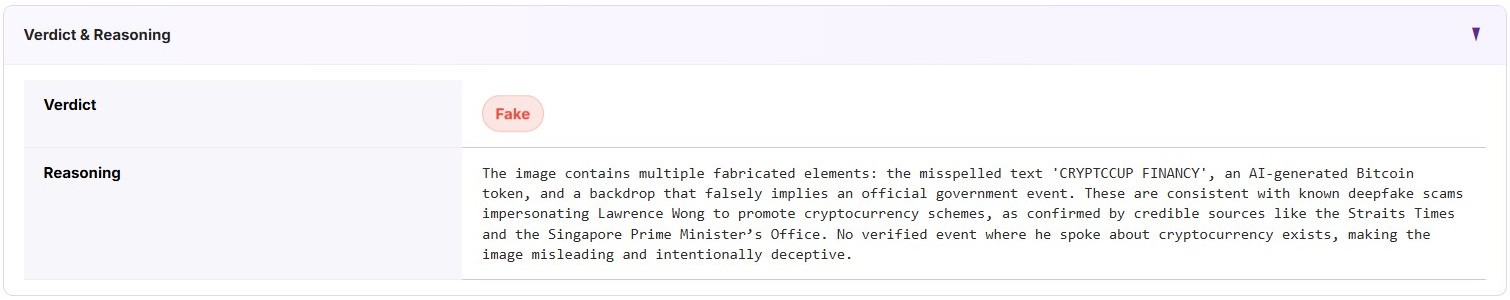

Verdict represents the LLM’s classification of the input content as Real or Fake, indicating whether the information is deemed truthful or misleading.

Reasoning provides the explanation behind the Verdict, based on the results of the forensic and analysis methods used (Image Forensics, Video Forensics, Domain Investigation, Web Crawling, Web Search).
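As a rough illustration of how a Verdict and its Reasoning relate to the individual analysis results, here is a minimal Python sketch. The names `Finding`, `Report`, and `build_report` are hypothetical and not part of ForenSIX, and the fixed rule used to pick the verdict is only a stand-in: in ForenSIX the classification is made by the LLM.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Finding:
    """One result from a single analysis method (hypothetical structure)."""
    method: str       # e.g. "Image Forensics", "Web Search"
    suspicious: bool  # whether this method flagged the content
    note: str         # short human-readable explanation


@dataclass
class Report:
    verdict: str      # "Real" or "Fake"
    reasoning: str    # explanation shown alongside the verdict
    findings: List[Finding] = field(default_factory=list)


def build_report(findings: List[Finding]) -> Report:
    # Stand-in rule for illustration only: any flagged finding yields "Fake".
    # ForenSIX delegates this decision to an LLM rather than a fixed rule.
    flagged = [f for f in findings if f.suspicious]
    verdict = "Fake" if flagged else "Real"
    # The reasoning cites the flagged methods, or all methods if none flagged.
    basis = flagged if flagged else findings
    reasoning = "; ".join(f"{f.method}: {f.note}" for f in basis)
    return Report(verdict=verdict, reasoning=reasoning, findings=findings)
```

The point of the structure is that the Reasoning is always traceable to named methods, so a user can see which analysis drove the Verdict.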

Face Recognition analyses facial features in an image or video to verify a person’s identity and detect inconsistencies. By comparing facial patterns across frames or with known images, it helps identify signs of manipulation that may indicate the media is fake.
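One common way to compare facial patterns across frames is to turn each detected face into a numeric embedding and measure how similar the embeddings are. The sketch below assumes the embeddings have already been produced by some face model. The function names and the 0.8 threshold are illustrative assumptions, not details of ForenSIX.

```python
import math
from typing import List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector carries no identity information
    return dot / (norm_a * norm_b)


def inconsistent_frames(
    embeddings: Sequence[Sequence[float]],
    reference: Sequence[float],
    threshold: float = 0.8,  # assumed cut-off, would need tuning on real data
) -> List[int]:
    """Indices of frames whose face embedding drifts away from the reference."""
    return [
        i for i, emb in enumerate(embeddings)
        if cosine_similarity(emb, reference) < threshold
    ]
```

A frame that falls below the threshold is only a hint that the face may have been swapped or altered. Pose, lighting, and occlusion can also lower the similarity.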

Video Analysis examines visual and temporal patterns within a video to detect signs of manipulation or synthetic generation. It analyses elements such as frame consistency, motion patterns, lighting, and visual artefacts to identify anomalies that may indicate the video has been altered or fabricated.
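To make the idea of frame consistency concrete, the sketch below measures how much each frame differs from the previous one and flags transitions that are unusually large compared with the rest of the clip. It assumes each frame is a flat list of greyscale pixel values of equal length. The function names and the z-score threshold are assumptions for illustration, and this simple statistic is not necessarily what ForenSIX uses.

```python
from statistics import mean, pstdev
from typing import List, Sequence


def frame_differences(frames: Sequence[Sequence[float]]) -> List[float]:
    """Mean absolute pixel difference between each pair of consecutive frames."""
    return [
        mean(abs(p - c) for p, c in zip(prev, curr))
        for prev, curr in zip(frames, frames[1:])
    ]


def abrupt_transitions(
    frames: Sequence[Sequence[float]],
    z_threshold: float = 2.0,  # assumed cut-off in standard deviations
) -> List[int]:
    """Indices of frames that differ from their predecessor far more than usual."""
    diffs = frame_differences(frames)
    if len(diffs) < 2:
        return []  # not enough transitions to define what "usual" means
    mu, sigma = mean(diffs), pstdev(diffs)
    if sigma == 0:
        return []  # every transition is identical, nothing stands out
    # diffs[i] compares frame i with frame i + 1, so report frame i + 1.
    return [i + 1 for i, d in enumerate(diffs) if (d - mu) / sigma > z_threshold]
```

Legitimate scene cuts also produce abrupt transitions, so a flagged frame is a candidate for closer inspection rather than proof of manipulation.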

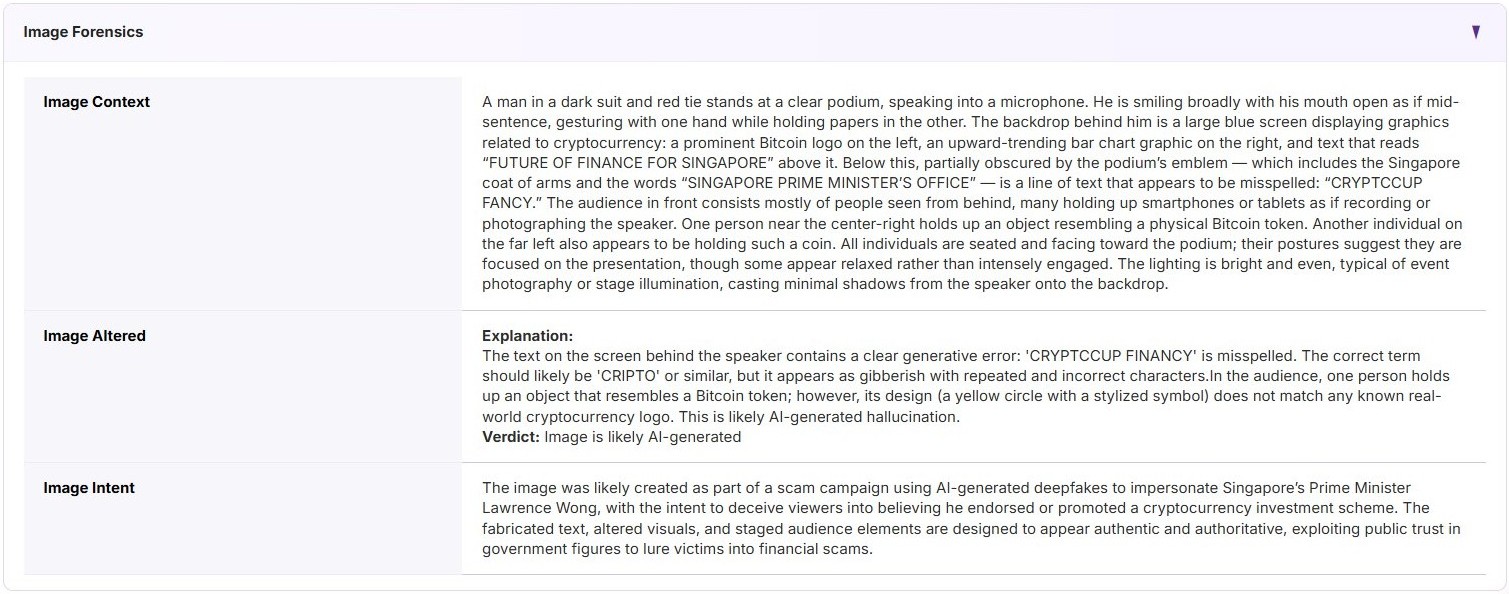

Image Context is the LLM’s description of the visual content it interprets from the input image.

Image Altered indicates whether the input image appears to have been modified or manipulated, based on detected visual inconsistencies or signs of editing.

Image Intent describes the likely purpose or message conveyed by the image, based on the visual elements and context interpreted by the LLM.

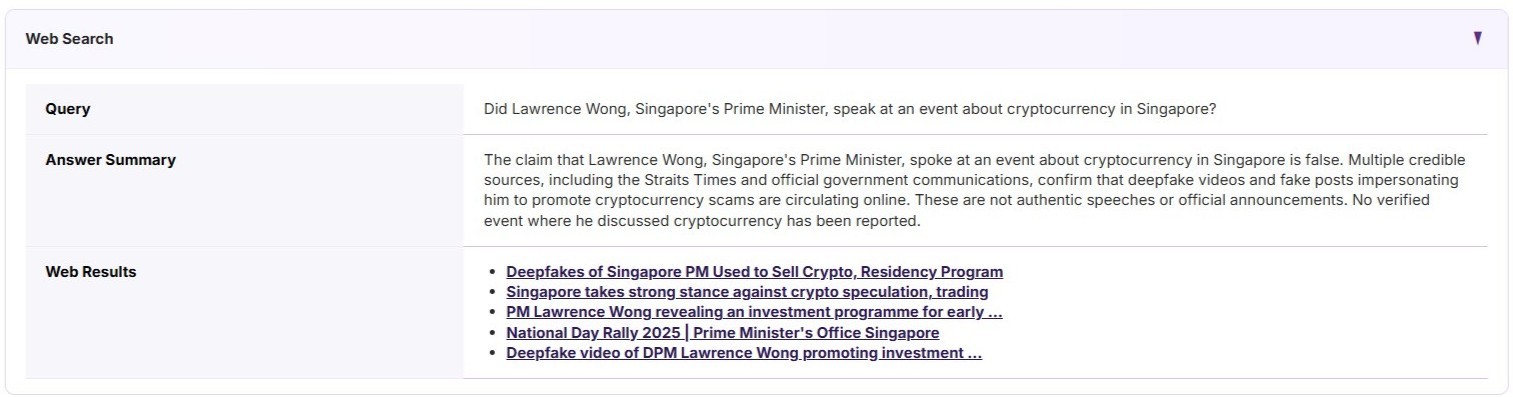

The LLM generates a Query from the information gathered by earlier analyses and uses it to search the web, checking whether the narrative of the input content is factual.

From the Web Results, the LLM determines whether the input content is true or false, and explains why with an Answer Summary.

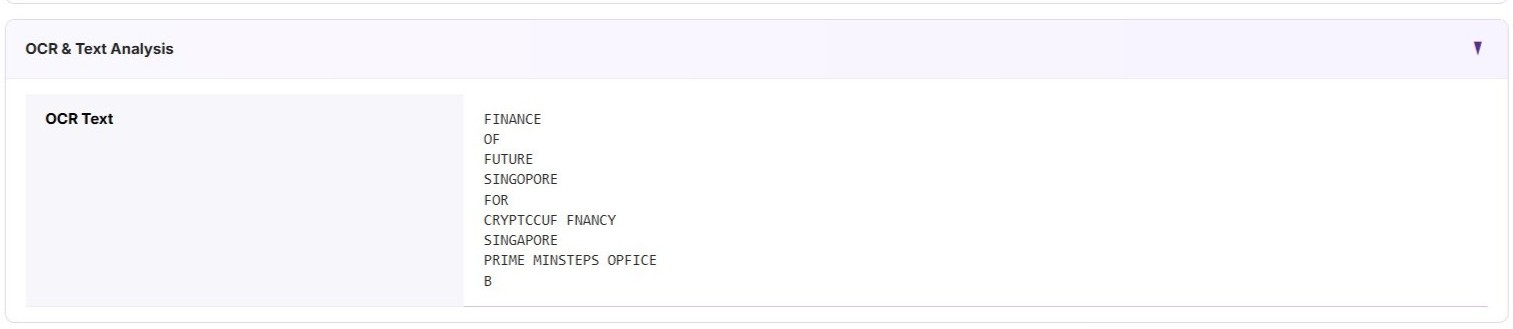

OCR extracts and converts any text found within the input image into machine-readable text, allowing the system to analyse written content present in the image.

Analysing the extracted text for inconsistencies, manipulations, or misleading content helps determine whether an image is real or fake.

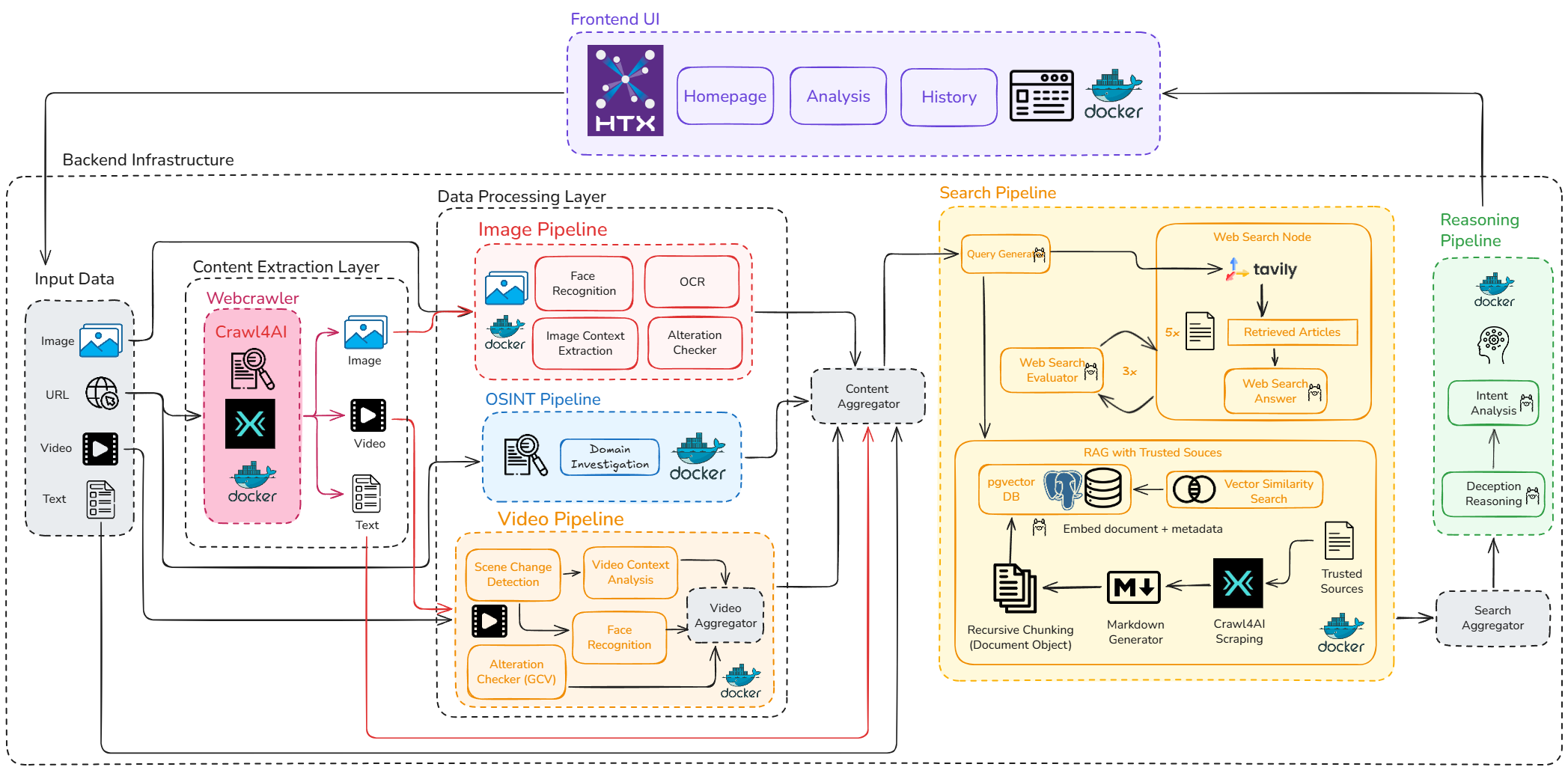

Methods shown: Web Crawling, Domain Investigation, Image Forensics, Video Forensics, Web Search (Tavily), Reasoning (LLM).