Proj 09 – IFX – GenAI Powered Infineon Technical Assistant

AI-Powered Technical Assistant for Infineon Developers

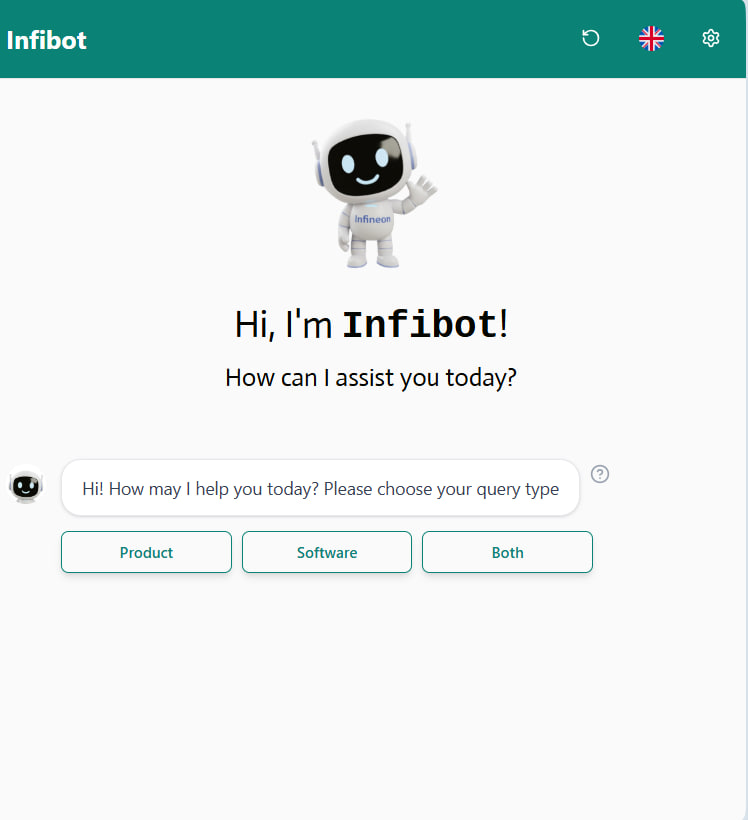

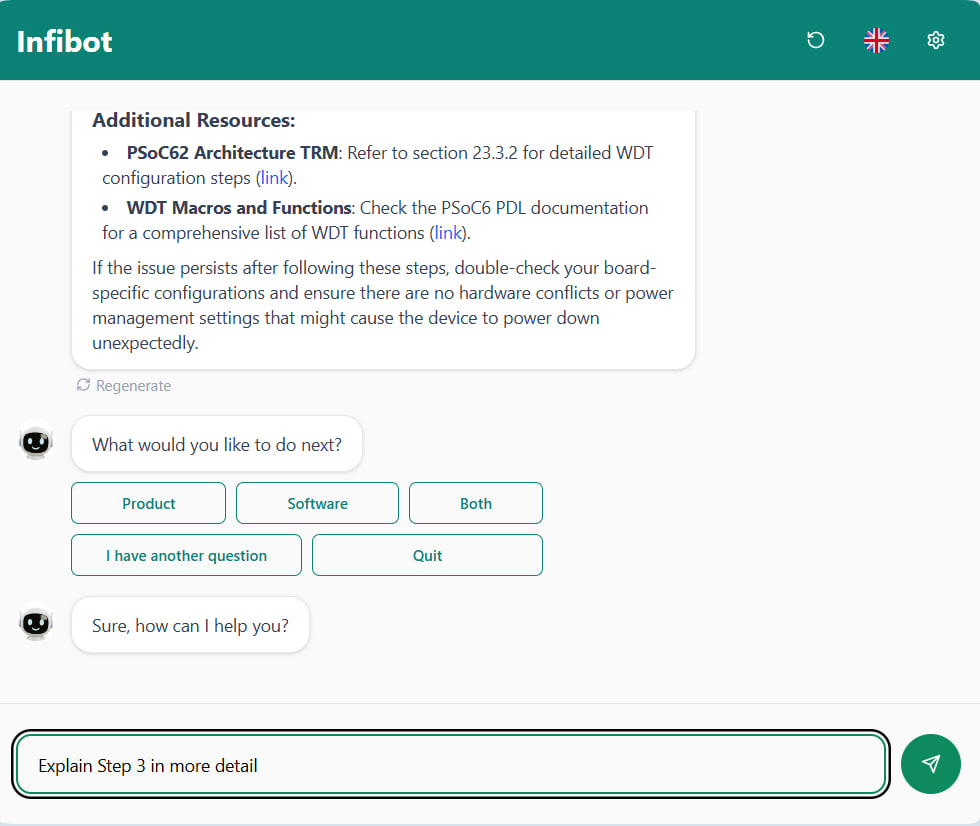

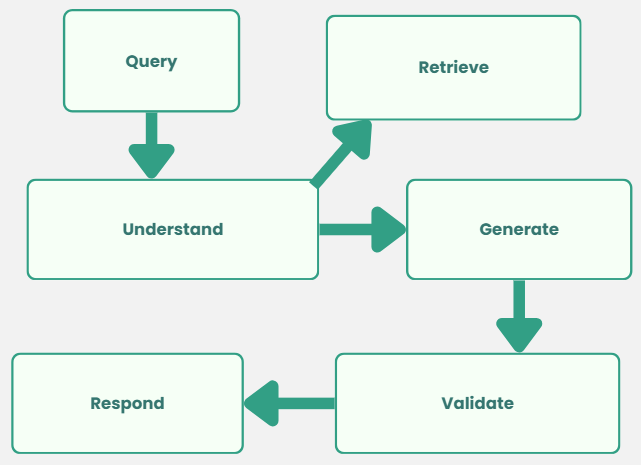

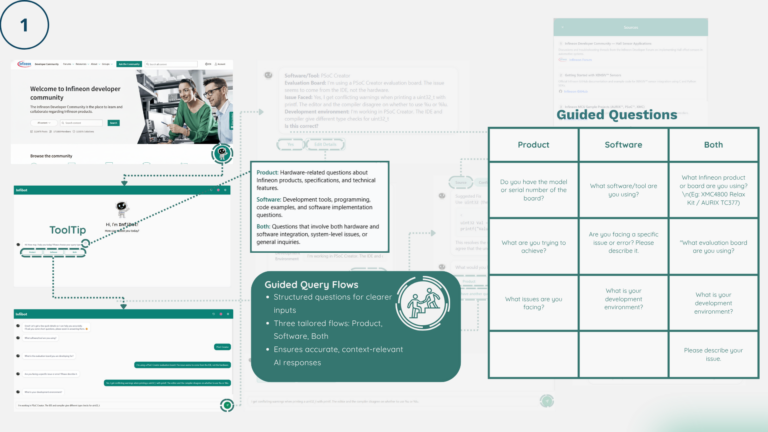

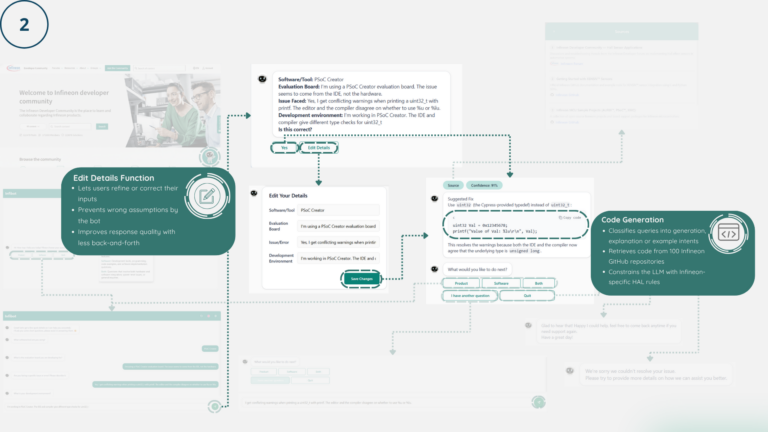

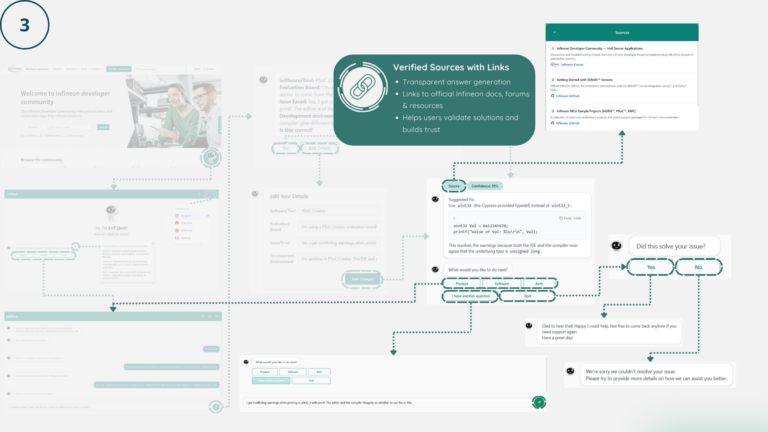

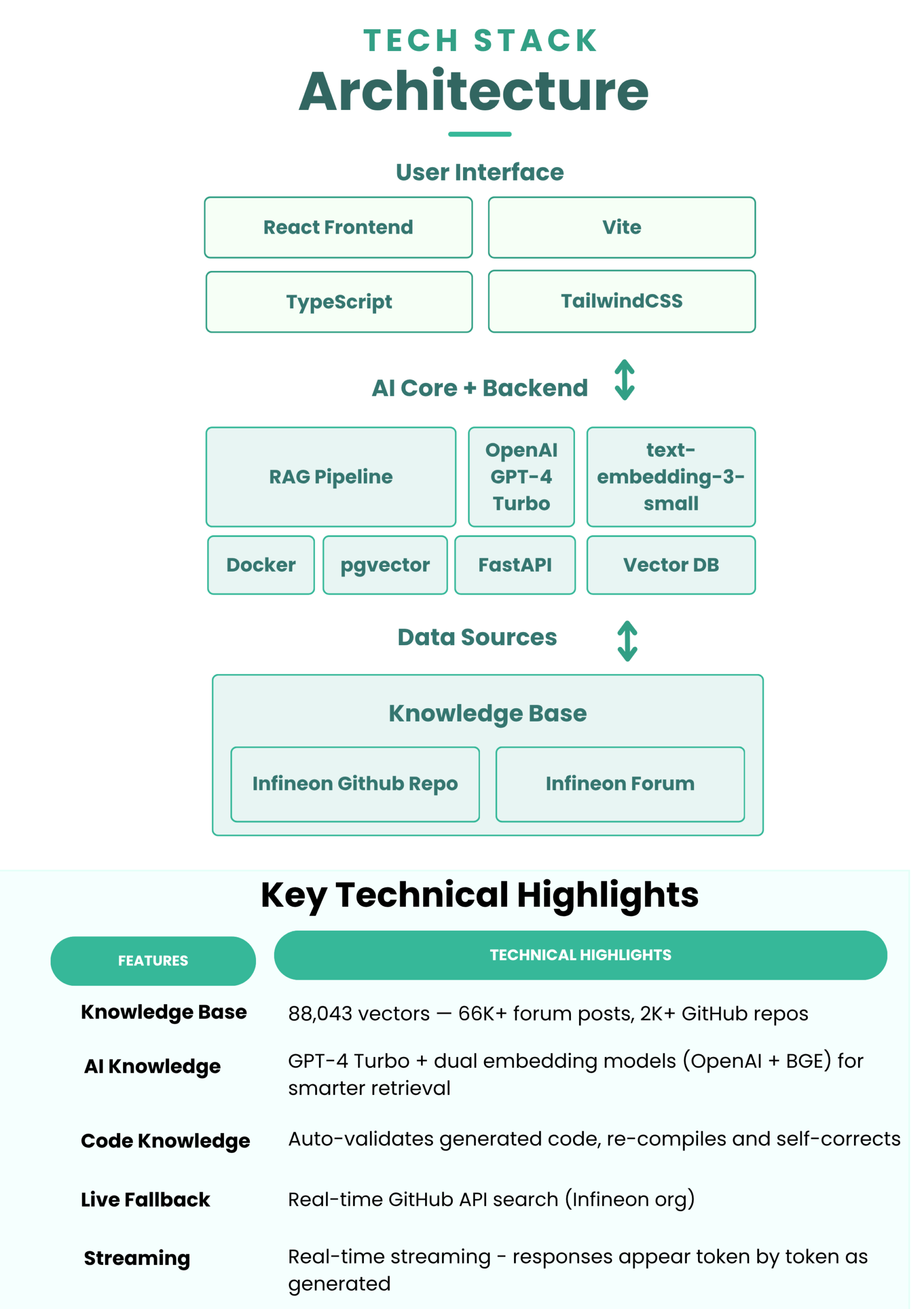

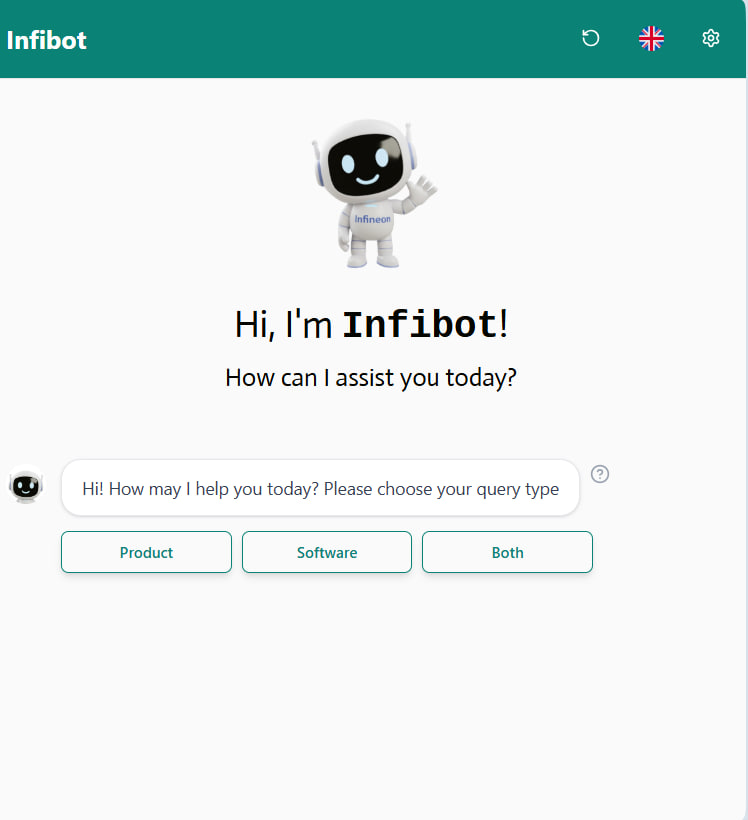

Infibot is a generative AI‑powered technical chatbot designed to support developers working with Infineon products.

It consolidates community forums and code libraries into a unified retrieval system and delivers code and context‑aware assistance, reducing troubleshooting time while keeping responses accurate and grounded in verified Infineon sources.

Introducing Proj 09 – IFX – GenAI Powered Infineon Technical Assistant

Developers working with Infineon products often waste valuable time hunting through scattered forums and documentation just to solve a single technical issue. Infibot changes that.

By consolidating community knowledge and code libraries into a unified AI-powered assistant, Infibot delivers fast, accurate, context-aware answers, grounded in verified Infineon sources.

Team members

Chang Wei Zher, Nicklas (ESD), Muhammad Irfan Bin Djuanda (ESD), Ang Li En Eldrick (ISTD), Mohamed Ammar Bin Mohamed Yusri (DAI), Shah Hetavi Hardik (ISTD), Pham Hong Quan (ISTD), Ng Junhao Marcus (ISTD)

Instructors:

- Mahamarakkalage Dileepa Yasas Fernando

Writing Instructors:

- Belinda Seet