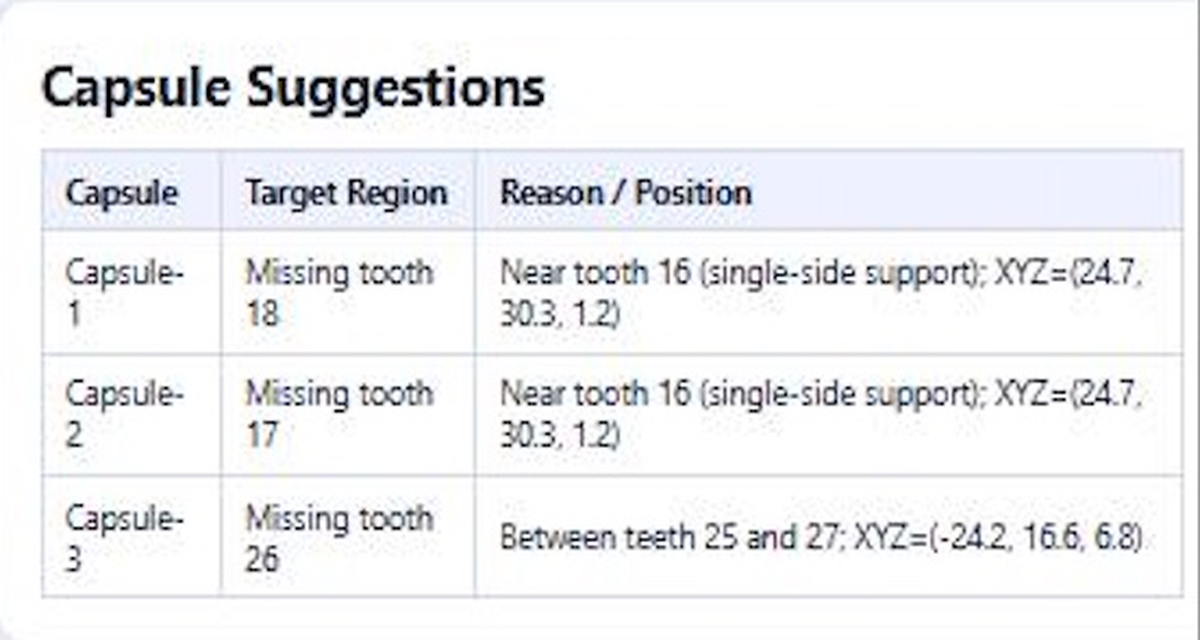

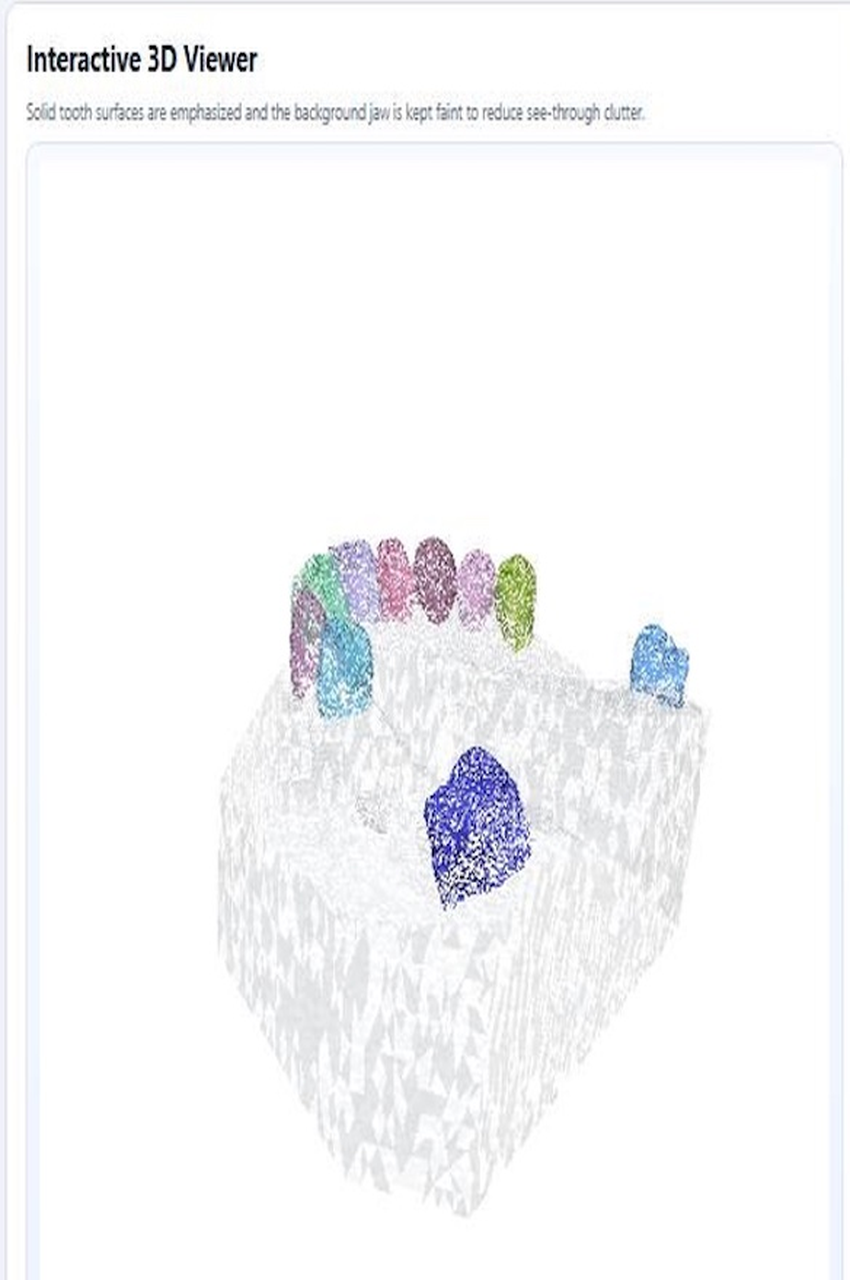

The platform allows users to upload and analyse 3D dental scans in .obj format. Once a scan is uploaded, a trained AI model predicts tooth labels, and the predictions are grouped into individual teeth. Each tooth is assigned an FDI tooth number, and its vertex count is used to classify it as present or missing. The results are displayed as a color-coded segmented scan in an interactive 3D viewer, alongside a tooth-by-tooth summary. From this, the platform identifies present and missing teeth and suggests possible capsule placement areas.

The selected model is DentiSeg Pro, a fine-tuned version of TSegNet [ToothGroupNetwork by Ho Yeon Lim and Min Chang Kim — https://github.com/limhoyeon/ToothGroupNetwork], adapted for improved performance on dental scans with multiple missing teeth. Among the three models evaluated — TSegNet (baseline), DentiSeg Plus (intermediate improved model), and DentiSeg Pro (final fine-tuned model), DentiSeg Pro achieved the best performance on the Contoral benchmark.

Rather than training from scratch, DentiSeg Pro was fine-tuned from the DentiSeg Plus checkpoint using a dataset of 72 jaw scans, all of which were partial-edentulism cases, with 71 of the 72 scans containing three or more missing teeth. This focused the fine-tuning specifically on challenging missing-tooth scenarios, which was one of the team’s main technical contributions.

It uses a two-stage design made up of Farthest Point Sampling (FPS) and Boundary-Detail Learning (BDL). FPS captures the overall structure of the full dental scan, while BDL refines local tooth boundaries in more difficult regions. This helps the model handle complex scans more effectively, particularly those involving multiple missing teeth.

After segmentation, the platform displays the FDI tooth number, vertex count, and presence status for each expected tooth. A threshold of 500 vertices is used to determine whether a tooth is classified as present or missing.

For evaluation, the team manually assessed each company-provided test scan to record which teeth were present or missing as ground-truth data. The model outputs were then compared against this ground-truth on a tooth-by-tooth basis. The evaluation focuses on present-or-missing tooth detection rather than exact segmentation boundary quality.