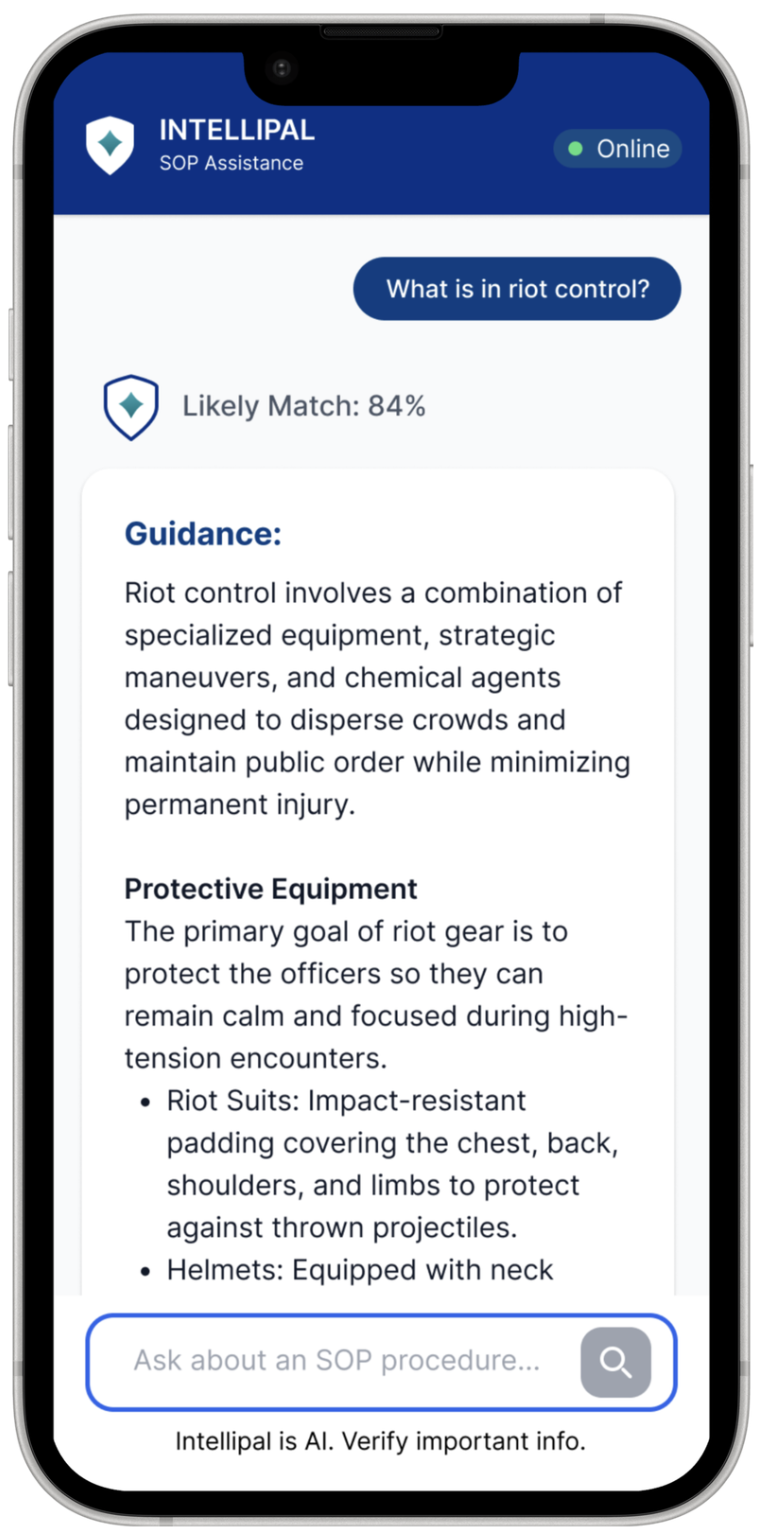

Imagine yourself as a rookie officer...

Let's Identify the Problem Statement

Ground Response Force (GRF) officers currently lack a reliable way to access critical Standard Operating Procedures (SOPs) and legal reference materials while deployed in the field.

The existing knowledge base relies on exact keyword search and on a stable internet connection, which is often unavailable in operational “blackspots”.

Introducing...

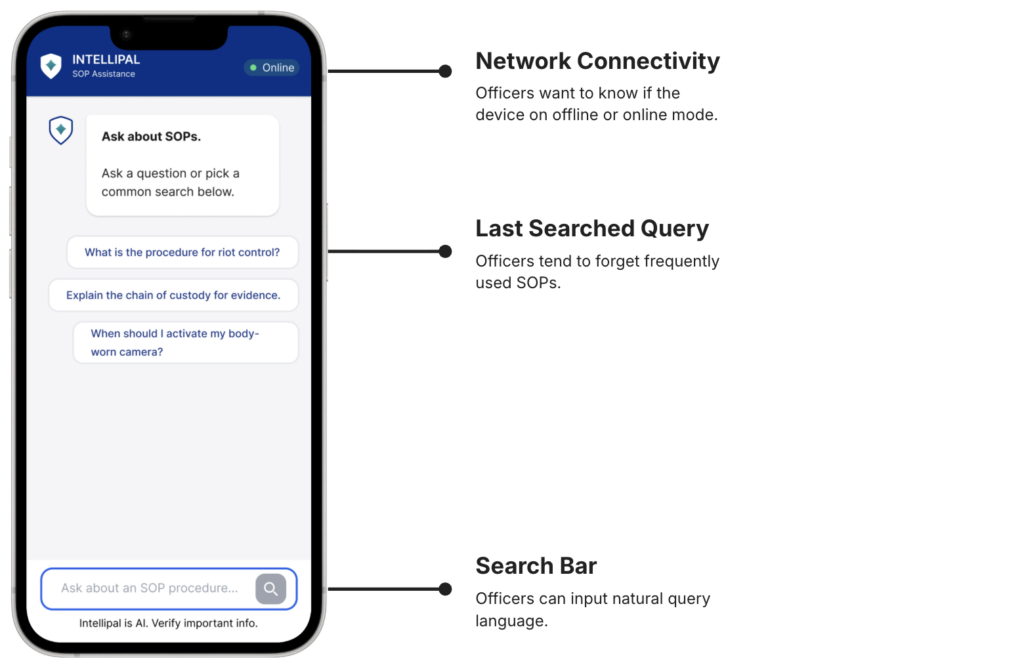

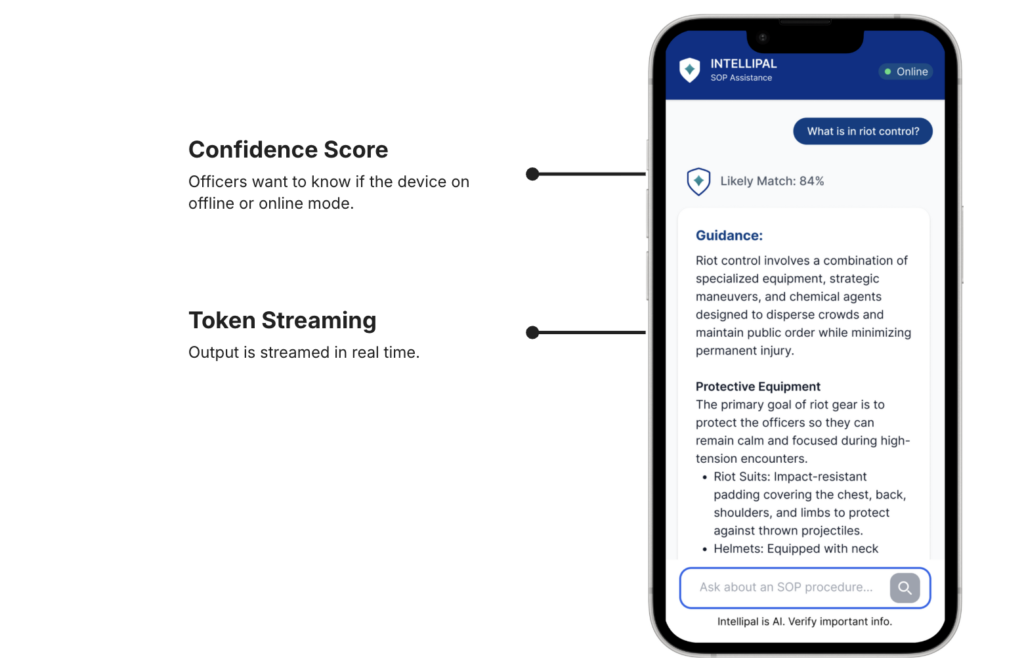

Take a Closer Look at Our Product

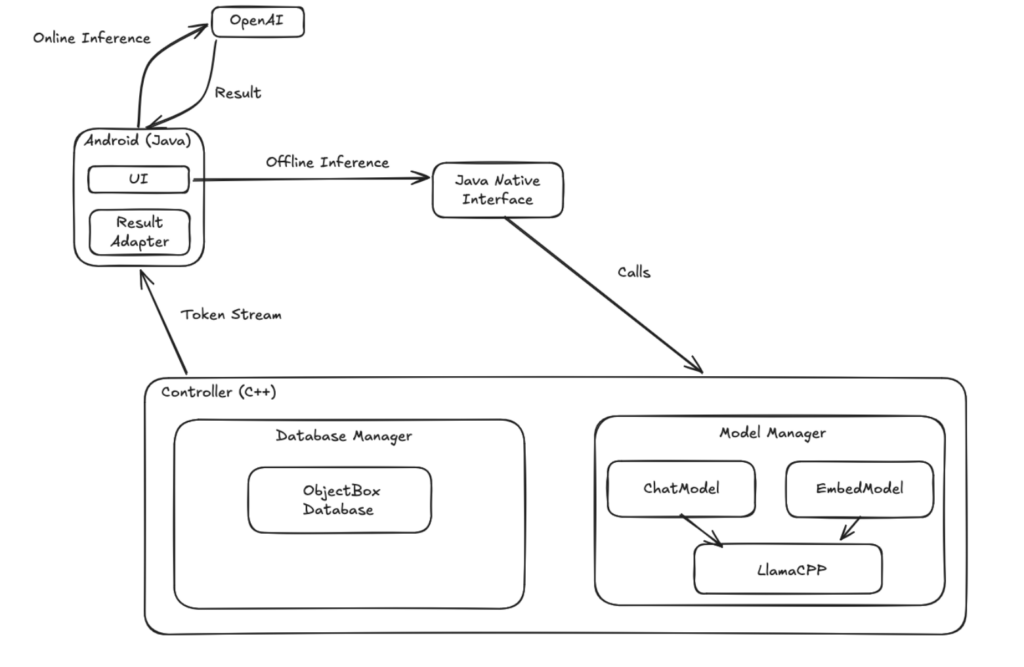

System Architecture

Product Evaluation

96% ↓

Time to Reach Target Content

6.3s → 0.9s

Time to Retrieve Confidence Score

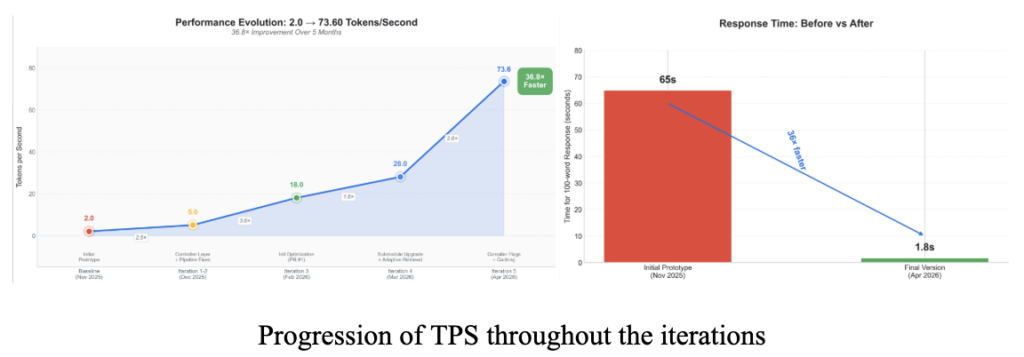

36x

System Throughput Increase

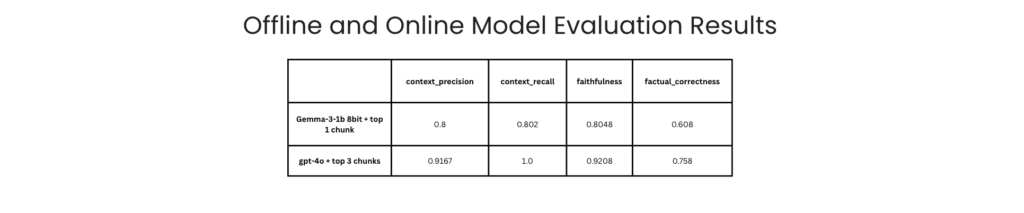

Our evaluation indicates that, after several technical improvements, the system is fast, reliable, and ready for frontline use. We tested the prototype on four metrics: Faithfulness, Answer Relevance, Context Precision, and Context Recall. The results show that our search pipeline is highly accurate: it consistently retrieves the right documents and does not fabricate information.
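To make the two retrieval metrics concrete, here is a minimal sketch of Context Precision and Context Recall computed from document IDs. It is a simplified, set-based illustration and not necessarily how our evaluation scored them (evaluation frameworks often use rank-aware or model-judged variants). The function names and the document IDs are hypothetical.

```python
def context_precision(retrieved, relevant):
    """Fraction of retrieved documents that are actually relevant."""
    if not retrieved:
        return 0.0
    hits = sum(1 for doc_id in retrieved if doc_id in relevant)
    return hits / len(retrieved)


def context_recall(retrieved, relevant):
    """Fraction of relevant documents that were retrieved."""
    if not relevant:
        return 0.0
    found = sum(1 for doc_id in relevant if doc_id in retrieved)
    return found / len(relevant)


# Hypothetical example: four chunks retrieved, three chunks truly relevant.
retrieved_ids = ["sop_12", "sop_07", "law_03", "sop_99"]
relevant_ids = {"sop_12", "law_03", "law_08"}

precision = context_precision(retrieved_ids, relevant_ids)
recall = context_recall(retrieved_ids, relevant_ids)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

High precision means little irrelevant text reaches the model; high recall means the needed SOP or legal passage is rarely missed.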

We learned that while shrinking the model and compressing the text speeds things up, it can hurt the factual correctness of the final answers. We found that 4-bit or 8-bit quantized models combined with strict noise filtering give the best balance between speed and accuracy. Over four major updates, generation speed rose from 2 to 73 tokens per second and the initial wait time dropped to just 1.8 seconds. Combined with faster database search methods such as partial loading, the final system delivers fast, trustworthy guidance to officers directly on their devices.
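As a quick sanity check on the headline numbers, the sketch below recomputes the speedup and the percentage reduction from the reported before/after values. The helper names are hypothetical; the inputs are the figures reported on this poster (2 → 73 tokens per second, 6.3 s → 0.9 s).

```python
def speedup(before, after):
    """How many times larger `after` is than `before` (for rates)."""
    return after / before


def percent_reduction(before, after):
    """Percentage drop from `before` to `after` (for durations)."""
    return (before - after) / before * 100


# Generation speed: 2 -> 73 tokens per second.
throughput_gain = speedup(2, 73)

# Time to retrieve confidence score: 6.3 s -> 0.9 s.
latency_drop = percent_reduction(6.3, 0.9)

print(f"throughput gain: {throughput_gain:.1f}x")
print(f"latency reduction: {latency_drop:.1f}%")
```

The throughput ratio works out to 36.5, which is consistent with the 36x figure shown above if that figure refers to generation speed.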

Impacts

Economic

Economic Impact

Social

Social Impact

Environmental

Environmental Impact

User Feedback

The app is quite easy to use and intuitive. Would have loved to have something like this in my starting days, so don't need bother supervisor as much

This app can be quite helpful to new joinees while on the way to the site.

This can be resourceful for times we face connectivity blackspots like in mall basements or carparks.