Qin Yanxia

Belinda Seet

Understanding how consumers wash their hair is important for improving hair care products. Studying real shower routines allows researchers to analyse how people interact with shampoo, conditioner, and other products.

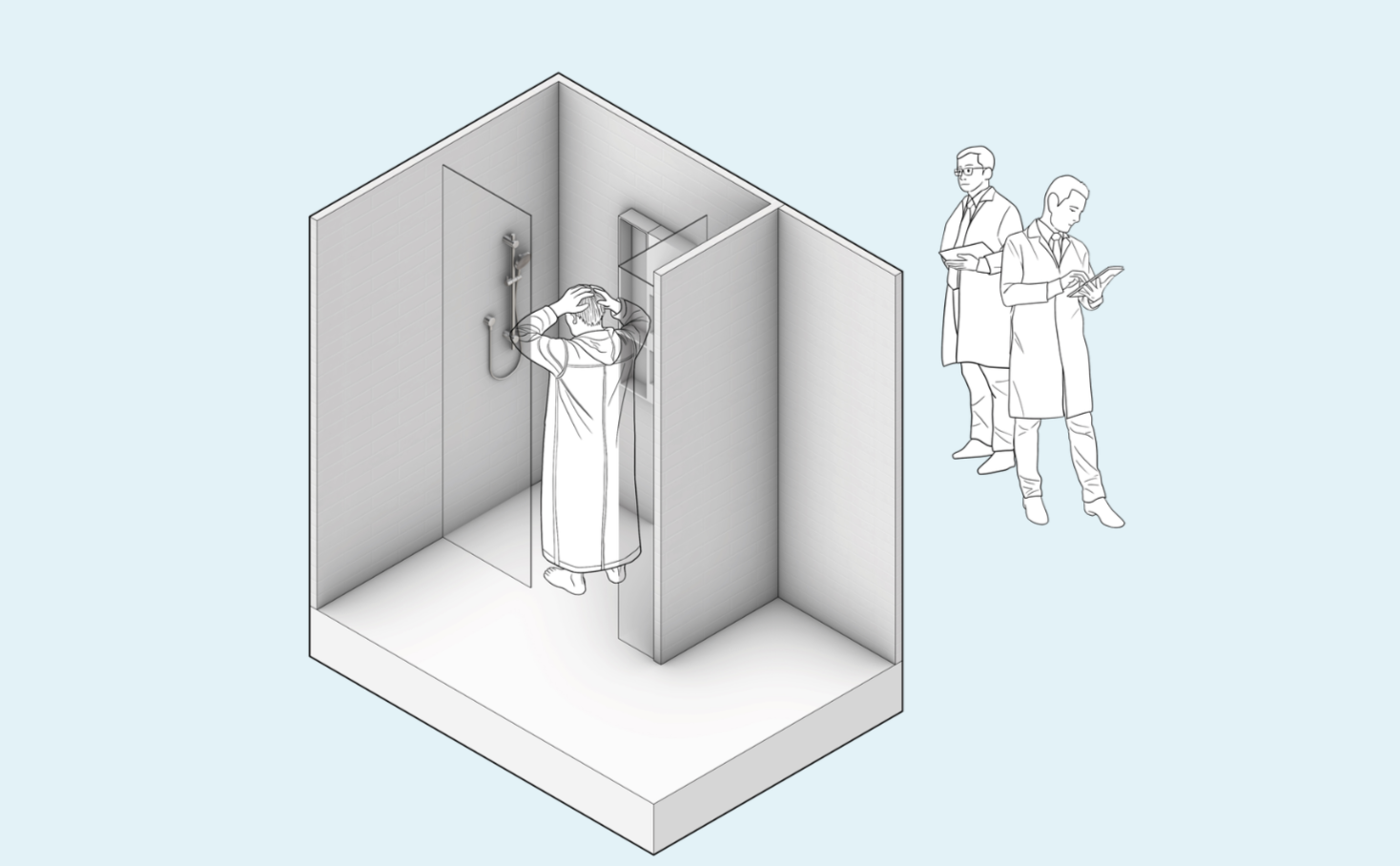

Traditional shower studies rely on direct observation or video recording, where participants are aware they are being monitored. While effective, these methods can be time-consuming and limit the depth and scalability of behavioural insights.

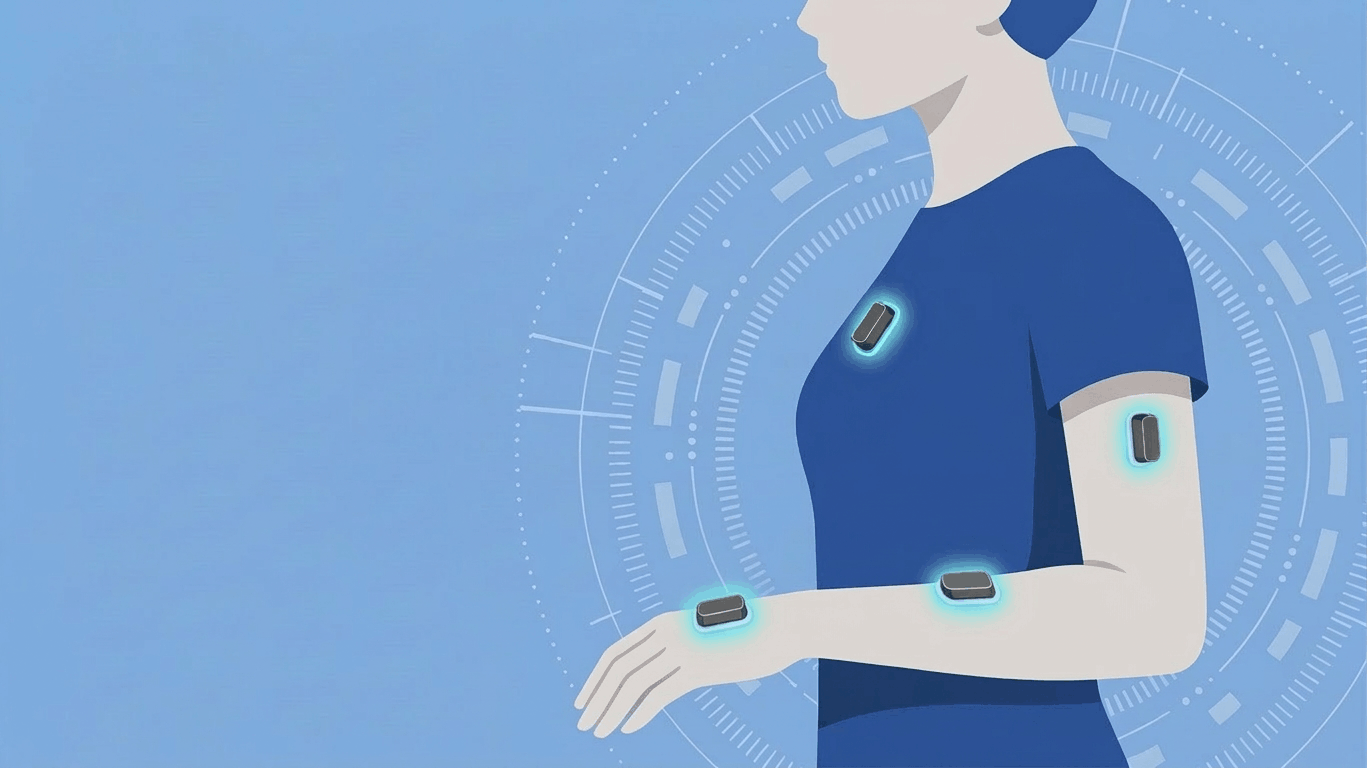

ShowerSense captures motion data instead of video. Wearable sensors record upper-limb movements during shower routines, which are reconstructed through a digital avatar. This allows researchers to analyse behaviour while protecting participant privacy.

Improving hair care products requires understanding how people wash their hair in real-world routines.

Traditional methods rely on direct observation or video recording. However, when participants know they are being observed, their behaviour may change, leading to less accurate insights.

ShowerSense addresses this challenge by using motion sensing instead of video recording. By capturing movements through wearable sensors and reconstructing them as a digital avatar, the system enables more natural behaviour analysis with minimal intrusion.

The system consists of several key components that transform motion data into an animated digital avatar for behavioural analysis.

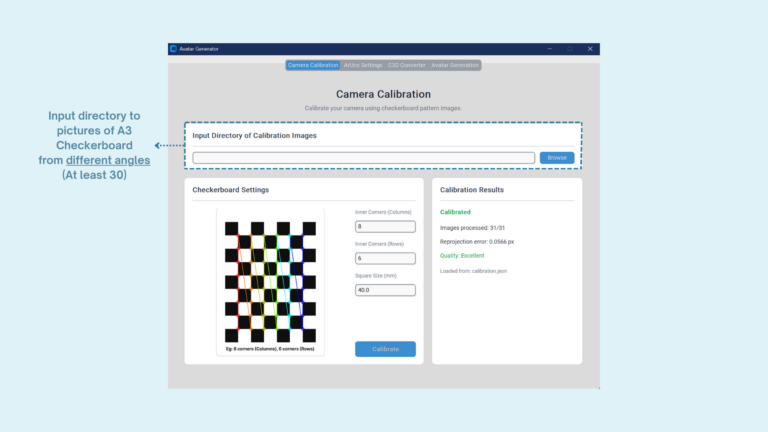

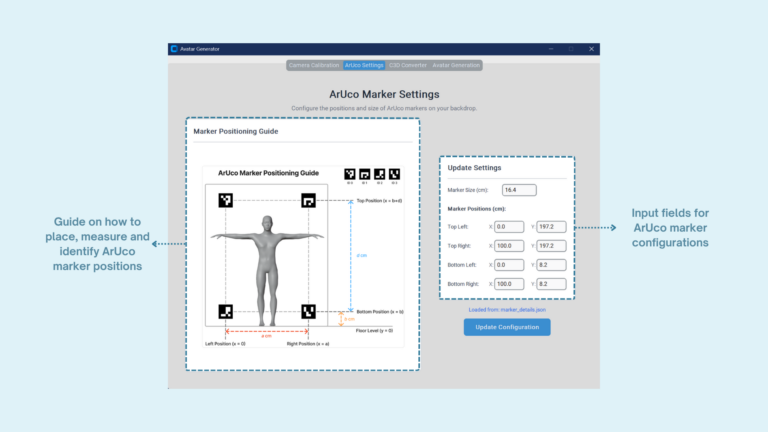

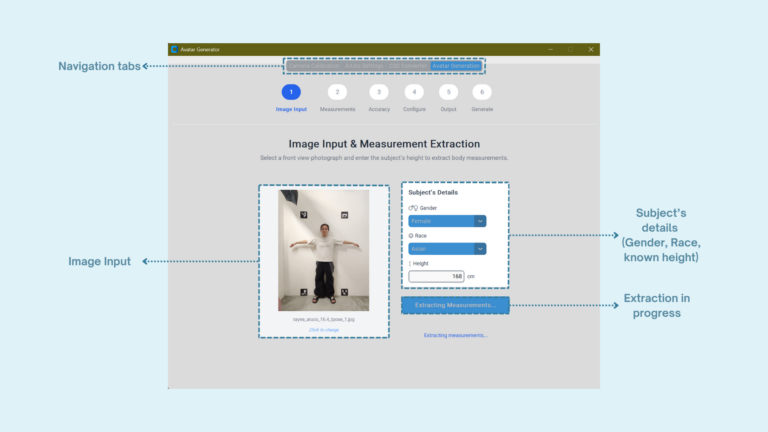

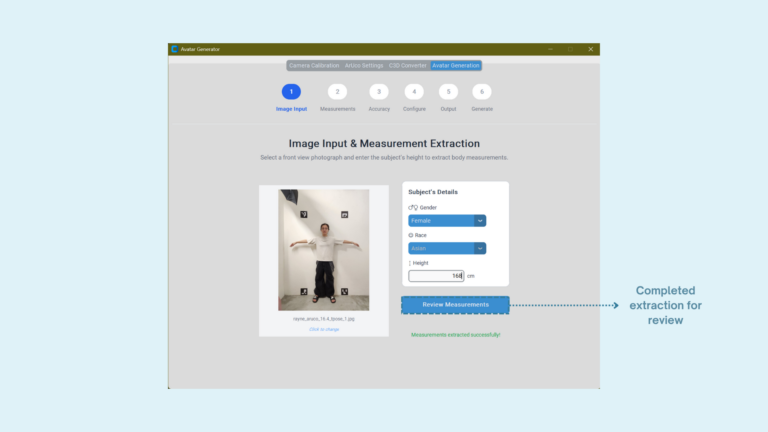

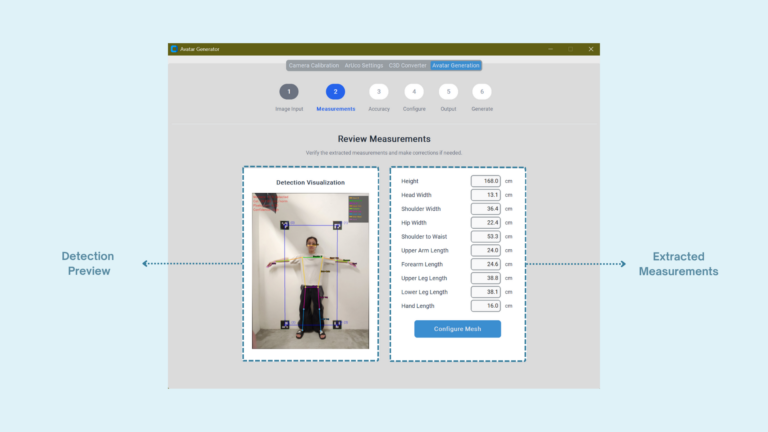

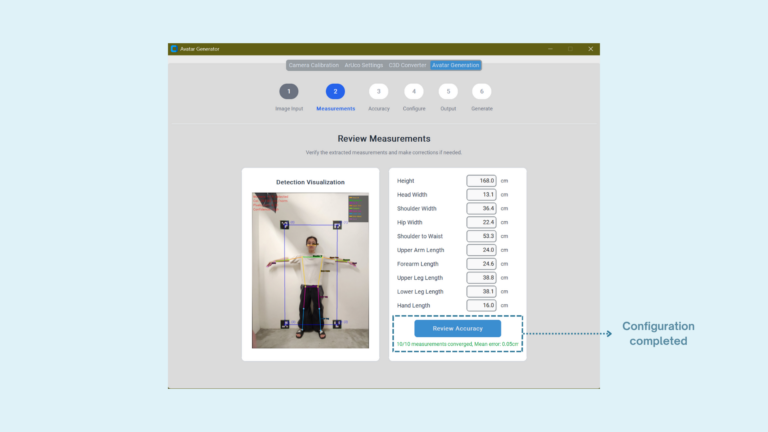

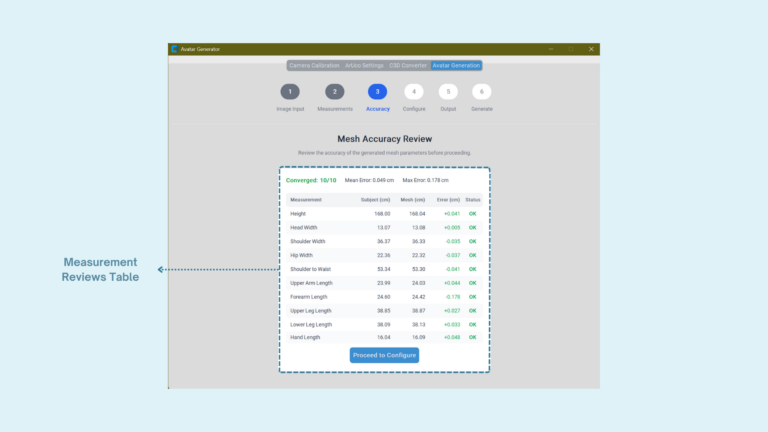

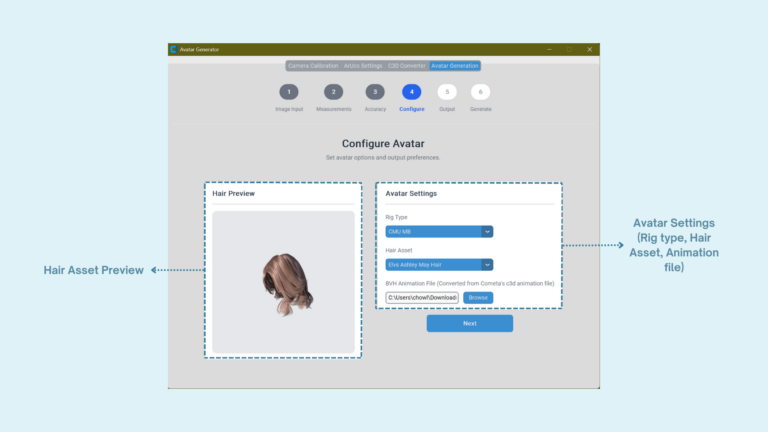

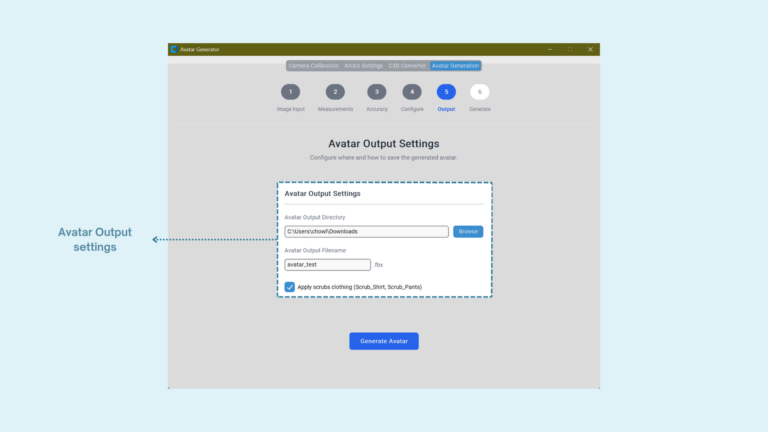

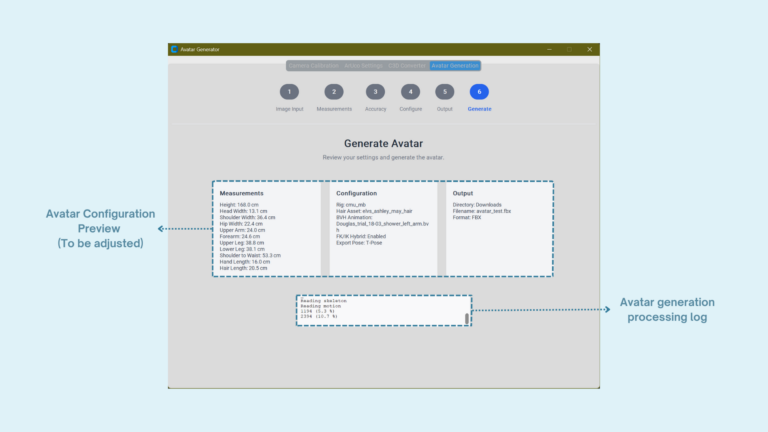

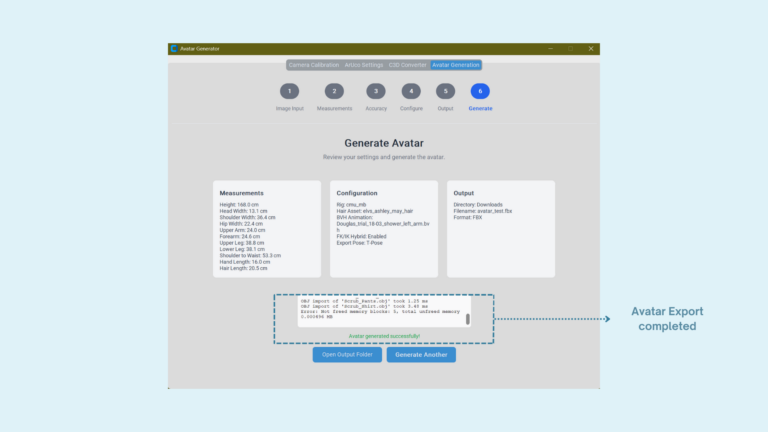

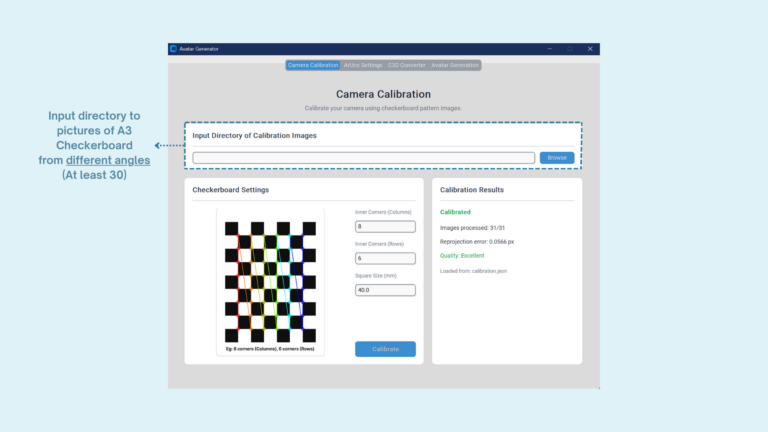

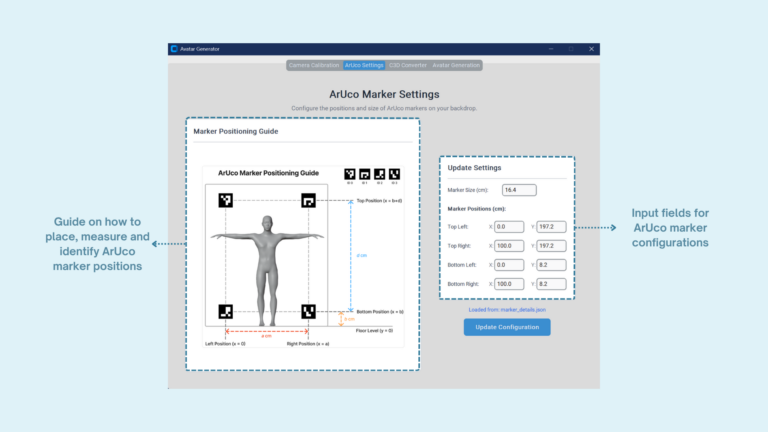

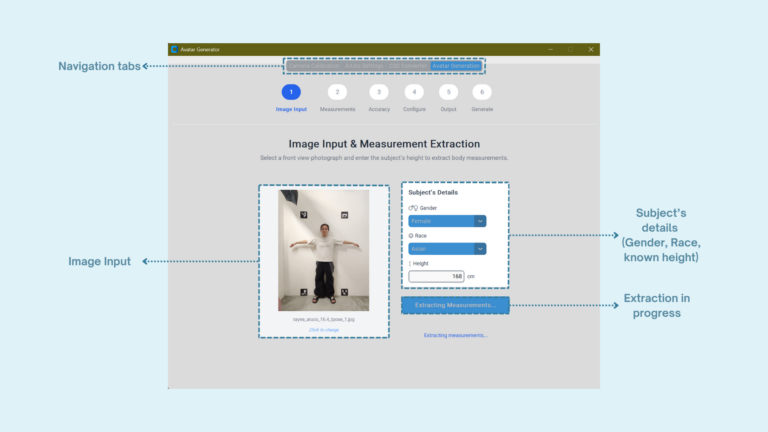

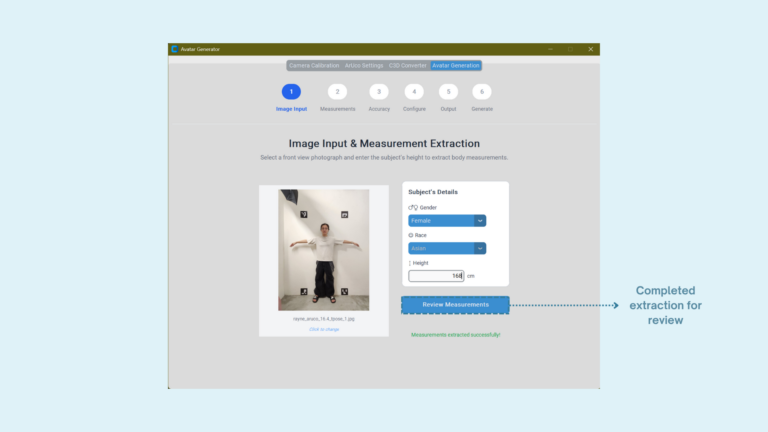

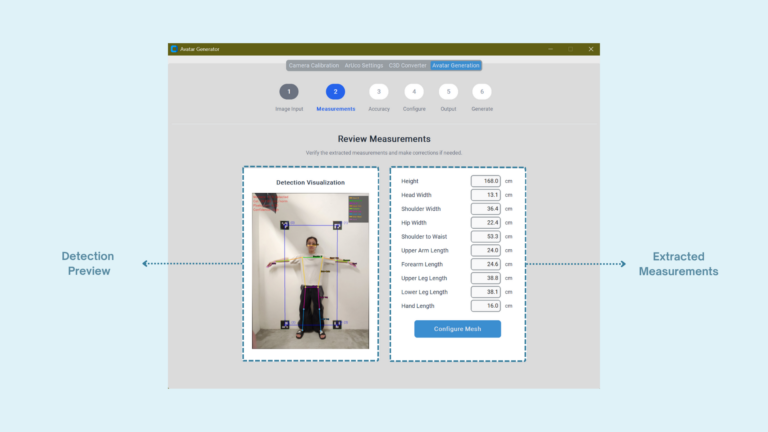

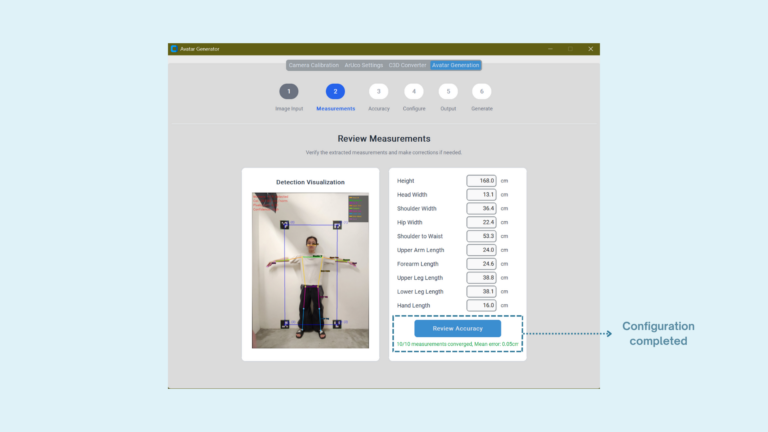

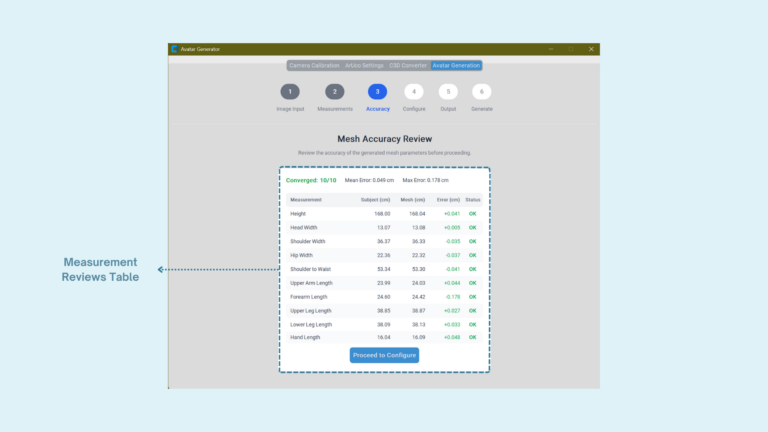

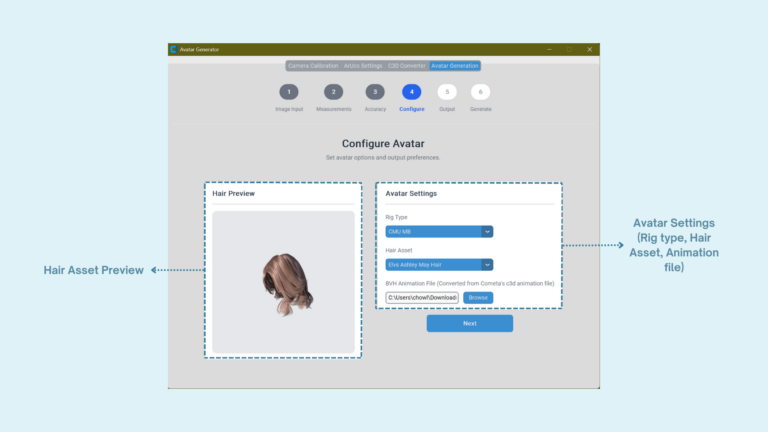

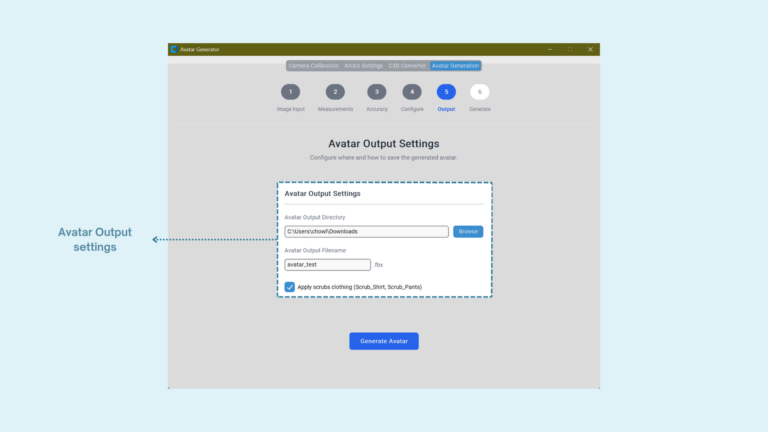

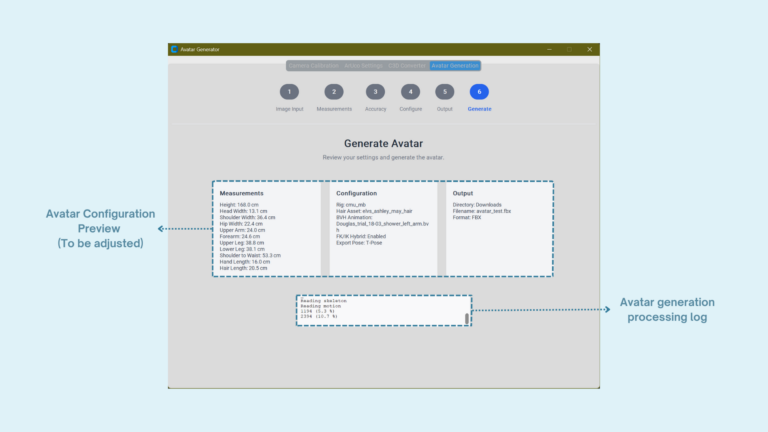

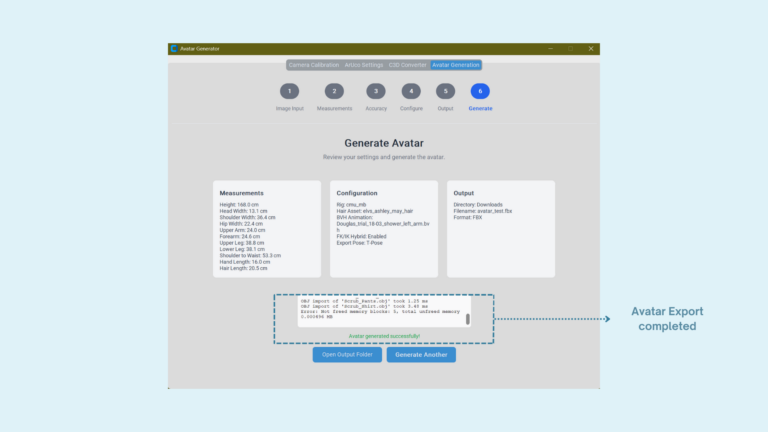

The Avatar Generator creates a personalised 3D avatar using calibration images and measurement extraction. Computer vision techniques detect body features and estimate body parameters, which are then used to generate a rigged avatar with configurable hair assets. This ensures the digital avatar reflects the participant’s body proportions while maintaining anonymity.
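As a rough illustration of the measurement extraction step, the sketch below scales pixel distances between detected keypoints to centimetres using the participant's known height. The source does not describe the actual method, so the keypoint names (`head_top`, `heel`, `shoulder`, `elbow`, `wrist`), the function `estimate_segment_lengths`, and the height-based scaling are all assumptions made for the example.

```python
import math

# Hypothetical segment definitions: each segment is the distance between two keypoints.
SEGMENTS = {
    "upper_arm": ("shoulder", "elbow"),
    "forearm": ("elbow", "wrist"),
}


def estimate_segment_lengths(keypoints, height_cm):
    """Convert pixel distances between 2D keypoints into centimetres.

    keypoints: dict mapping a keypoint name to an (x, y) pixel coordinate,
               taken from a front-facing calibration image (assumed).
    height_cm: the participant's standing height, used as the scale reference.
    """
    pixel_height = abs(keypoints["heel"][1] - keypoints["head_top"][1])
    if pixel_height == 0:
        raise ValueError("head_top and heel must differ vertically")
    cm_per_pixel = height_cm / pixel_height
    return {
        name: math.dist(keypoints[start], keypoints[end]) * cm_per_pixel
        for name, (start, end) in SEGMENTS.items()
    }


if __name__ == "__main__":
    example_keypoints = {
        "head_top": (100, 50),
        "heel": (100, 850),
        "shoulder": (100, 200),
        "elbow": (100, 350),
        "wrist": (100, 470),
    }
    print(estimate_segment_lengths(example_keypoints, height_cm=160))
```

Lengths estimated this way could then set the bone lengths of the rigged avatar. A single front-facing image ignores depth, so a real pipeline would likely need more than this.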

Wearable IMU sensors record upper-limb movements during shower routines. The captured motion data is processed and converted into animation files that can be applied to the avatar skeleton. This allows the digital avatar to replicate the participant’s movements without requiring video recording.
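One way to picture how sensor orientations could drive an avatar skeleton is simple forward kinematics. This is a minimal sketch and not the project's actual pipeline. It assumes each IMU reports a unit quaternion `(w, x, y, z)` giving its limb segment's orientation in a shared world frame, and that each bone points along +x in its rest pose. The function names and segment lengths are illustrative.

```python
import math


def quat_rotate(q, v):
    """Rotate 3D vector v by unit quaternion q = (w, x, y, z)."""
    w, x, y, z = q
    vx, vy, vz = v
    # t = 2 * cross(q_vec, v)
    tx = 2 * (y * vz - z * vy)
    ty = 2 * (z * vx - x * vz)
    tz = 2 * (x * vy - y * vx)
    # v' = v + w * t + cross(q_vec, t)
    return (
        vx + w * tx + (y * tz - z * ty),
        vy + w * ty + (z * tx - x * tz),
        vz + w * tz + (x * ty - y * tx),
    )


def arm_joint_positions(shoulder, q_upper_arm, q_forearm, upper_len, fore_len):
    """Return (elbow, wrist) positions from two segment orientations.

    Assumes both quaternions are world-frame orientations and that each
    bone lies along +x when unrotated.
    """
    upper_vec = quat_rotate(q_upper_arm, (upper_len, 0.0, 0.0))
    elbow = tuple(s + u for s, u in zip(shoulder, upper_vec))
    fore_vec = quat_rotate(q_forearm, (fore_len, 0.0, 0.0))
    wrist = tuple(e + f for e, f in zip(elbow, fore_vec))
    return elbow, wrist


if __name__ == "__main__":
    identity = (1.0, 0.0, 0.0, 0.0)
    half = math.sqrt(0.5)
    bend_90_about_z = (half, 0.0, 0.0, half)  # 90 degree rotation about z
    print(arm_joint_positions((0.0, 0.0, 0.0), identity, bend_90_about_z, 0.30, 0.25))
```

Running this for every sensor frame would give a sequence of joint positions or rotations. Exporting that sequence to an animation file format is a separate step not shown here.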

The Unreal Client visualises the animated avatar in an interactive environment. Users can preview motion playback, simulate hair physics, and record animations for analysis. This allows researchers to observe realistic shower behaviour while preserving participant privacy.

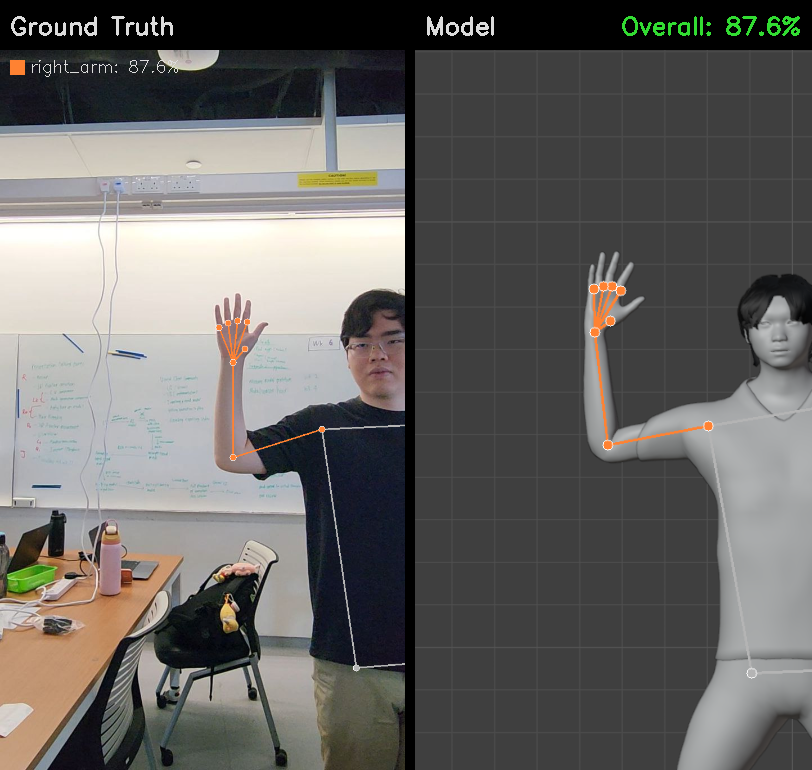

To evaluate the accuracy of our motion reconstruction, we compare the detected joint positions against ground truth keypoints obtained from visual data. The model achieves an overall accuracy of 87.6%, demonstrating its ability to reliably capture upper-limb movements using IMU sensors.

The comparison shows that the reconstructed avatar closely matches the participant’s arm and hand pose, indicating that motion-based sensing can effectively approximate real-world shower behaviour without relying on video recording.
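The source does not state how the accuracy figure is computed. One common way to score reconstructed joints against ground-truth keypoints is the Percentage of Correct Keypoints (PCK), where a joint counts as correct if it lies within a distance threshold of its ground-truth position. The sketch below shows that metric under this assumption. The threshold and sample coordinates are made up for illustration.

```python
import math


def pck(predicted, ground_truth, threshold):
    """Fraction of keypoints whose predicted position is within
    `threshold` (same units as the coordinates) of the ground truth."""
    if len(predicted) != len(ground_truth):
        raise ValueError("predicted and ground_truth must have equal length")
    if not predicted:
        raise ValueError("at least one keypoint is required")
    correct = sum(
        1 for p, g in zip(predicted, ground_truth) if math.dist(p, g) <= threshold
    )
    return correct / len(predicted)


if __name__ == "__main__":
    # Illustrative values only, not data from the project.
    predicted = [(0.0, 0.0), (1.0, 1.0), (2.0, 2.0), (5.0, 5.0)]
    ground_truth = [(0.0, 0.1), (1.0, 1.2), (2.0, 2.0), (3.0, 3.0)]
    print(pck(predicted, ground_truth, threshold=0.5))  # 0.75
```

The result depends heavily on the chosen threshold, so a reported accuracy is easier to interpret when the threshold is stated alongside it.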

The ShowerSense team would like to express our sincere gratitude to our capstone instructors, Dr Qin Yanxia and Dr Kan Ee May, for their guidance, feedback, and continuous support throughout the development of this project. Their insights were invaluable in shaping both the technical direction and research approach of our work.

We would also like to thank Ms Belinda Seet for her guidance on the communication and documentation of our project.

Special thanks to our industry mentor, Ms Valerie Yong from Procter & Gamble, for providing valuable industry perspectives, project guidance, and support throughout the collaboration.

At Singapore University of Technology and Design (SUTD), we believe that the power of design is rooted in an understanding of human experiences and needs, which drives innovation that enhances and transforms the way we live. This is why we have developed a multi-disciplinary curriculum delivered via a hands-on, collaborative learning pedagogy and environment that culminates in a Capstone project.

The Capstone project is a collaboration between companies and senior-year students. Students of different majors come together to work in teams and contribute their technology and design expertise to solve real-world challenges faced by companies. The Capstone project will culminate with a design showcase, unveiling the innovative solutions from the graduating cohort.

The Capstone Design Showcase is held annually to celebrate the success of our graduating students and the enthralling multi-disciplinary projects they have developed.